New FAQ says Apple will refuse pressure to expand child safety tools beyond CSAM

Apple has published a response to privacy criticisms of its new iCloud Photos feature of scanning for child abuse images, saying it "will refuse" government pressures to infringe privacy.

Apple's new child protection feature

Apple's suite of tools meant to protect children has caused mixed reactions from security and privacy experts, with some erroneously choosing to claim that Apple is abandoning its privacy stance. Now Apple has published a rebuttal in the form of a Frequently Asked Questions document.

"At Apple, our goal is to create technology that empowers people and enriches their lives -- while helping them stay safe," says the full document. "We want to protect children from predators who use communication tools to recruit and exploit them, and limit the spread of Child Sexual Abuse Material (CSAM)."

"Since we announced these features, many stakeholders including privacy organizations and child safety organizations have expressed their support of this new solution," it continues, "and some have reached out with questions."

"What are the differences between communication safety in Messages and CSAM detection in iCloud Photos?" it asks. "These two features are not the same and do not use the same technology."

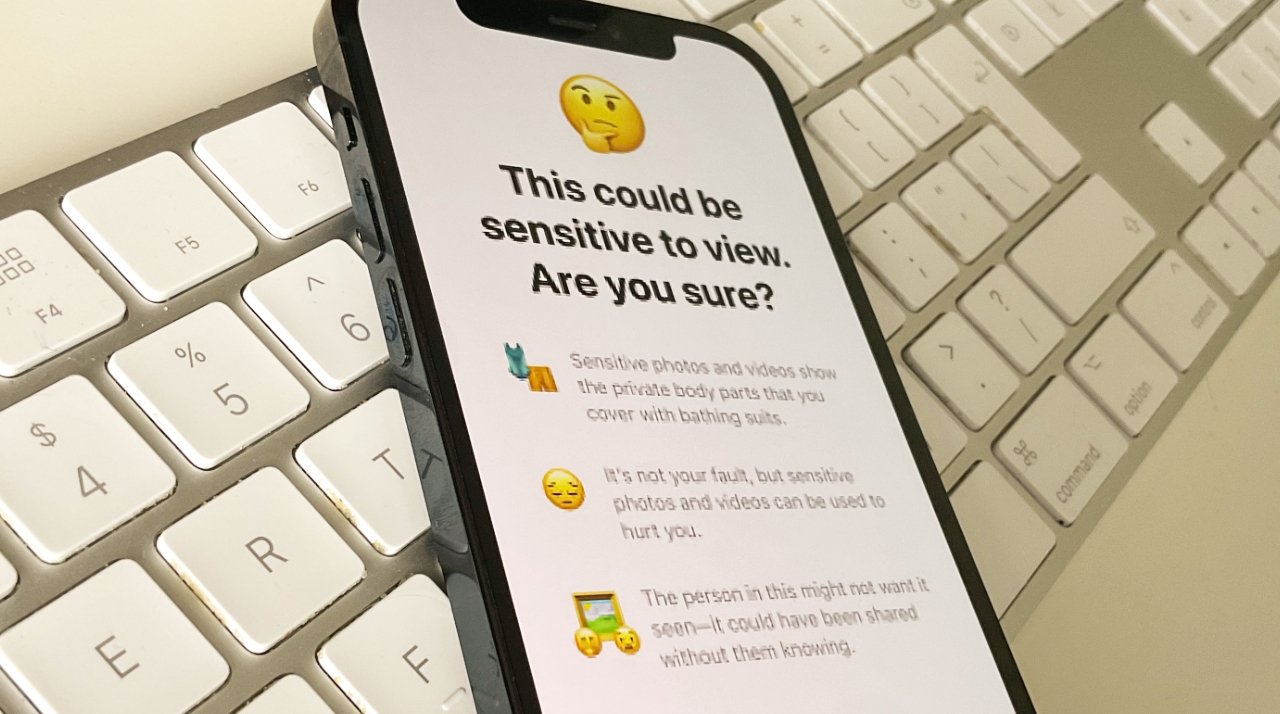

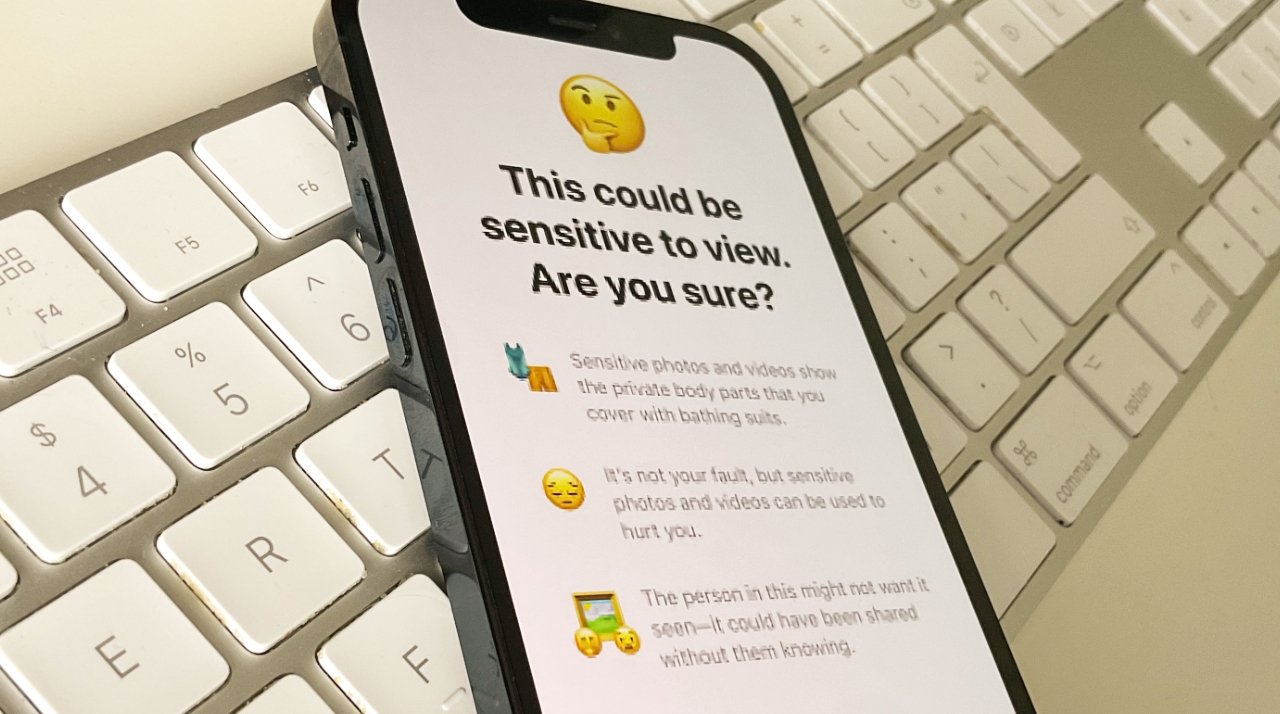

Apple emphasizes that the new features in Messages are "designed to give parents... additional tools to help protect their children." Images sent or received via Messages are analyzed on-device "and so [the feature] does not change the privacy assurances of Messages."

CSAM detection in iCloud Photos does not send information to Apple about "any photos other than those that match known CSAM images."

Much of the document details what AppleInsider broke out on Friday. However, there are a few points explicitly spelled out that weren't before.

First, a concern from privacy and security experts has been that this scanning of images on device could easily be extended to the benefit of authoritarian governments that demand Apple expand what it searches for.

"Apple will refuse any such demands," says the FAQ document. "We have faced demands to build and deploy government-mandated changes that degrade the privacy of users before, and have steadfastly refused those demands. We will continue to refuse them in the future."

"Let us be clear," it continues, "this technology is limited to detecting CSAM stored in iCloud and we will not accede to any government's request to expand it."

Apple's new publication on the topic comes after an open letter was sent, asking the company to reconsider its new features.

Second, while AppleInsider said this before based on commentary from Apple, the company has clarified in no uncertain terms that the feature does not work when iCloud Photos is turned off.

Comments

Riiiight.

Translation: Here at Apple, we might have created a back door, but we promise to only ever use it for good. Pinky swear!

The only people that think this is something new are people that never read the user agreements for cloud services.

How quickly Apple has forgotten that they caved in to a foreign government mandating changes so they could keep selling in China. They sacrificed the privacy and security of their users’ iCloud data, which is now stored in China, putting profits over customer privacy. Shame, Apple. I think they have proven how ‘steadfast’ they actually are… Maybe this will be the last iOS device.

And scanning with on-device learning of your photo library for any reason is an invasion of privacy without permission. Would you let someone into your house to look through your photo albums without permission? It’s the same at the end of the day. A warrant is the current requirement to do that! How can a tech giant replace legislation and human rights? Yes, and it is just hash scanning now - but tech giants don’t have a good track record on privacy!

Moreover, the fact that iCloud in China is not totally under Apple's control is probably a significant reason for Apple to rely on on-device processing to do these checks, as it means that it is totally under Apple's control.

Apple has set a precedent with that. Now many governments will take that precedent as a template and try to turn it into law. Yet in the past Apple resisted the request to develop a special iOS to break into criminals' phones, because that would have set a precedent.

Apple has clearly shown governments they are willing to scan data directly on a user’s device. That’s not inconsequential.

What if Apple decides next year to join the ‘misinformation’ bandwagon, for the ‘good of the people’? What if the year after that they decide to aid in politics through monitoring communications about political issues and delivering that information to ‘independent’ political organisations, for the ‘good of the people’?

Those are hypotheticals of course, but Apple should keep far and away from anything that smells like where it could lead. An authoritarian government (which our government is smelling more and more like), or a tech industry controlling people, is worse for a population than CSAM.

Wellll, the other guy being worse usually depends on where you're standing. From a software developer's point of view, it's not as clear.

Google (as one example of the other guy) also scans for CSAM pictures, but they do it on the server. What they don't do is install spyware on your phone to scan it before it reaches the server.

Now, in the future, this may change; Google may have no choice but to do the same thing, because the advantage that Apple's system has for law enforcement is that it is now pretty much impossible to encrypt pictures before they're scanned and uploaded.

But if Google does do the same thing, they have a couple of advantages over Apple.

Android is open-source, which means the backdoor code can be scrutinised and tested for correctness. The other thing, which will annoy a number of governments, is that folk will be able to build phones which disable it or leave it out altogether, so the only reliable way to find CSAM images (or whatever they're looking for) is to continue scanning server-side.

The other potential weak point in Apple's scheme is the backdoor itself. One mistake that Cupertino makes time and again is forgetting to check for data that can cause problems in the operating system. We've had at least two instances of text message strings that crash the phone on arrival. Pretty easy to avoid; just send the message to folk you don't like, not to your mother.

Apple is now going to be using a database that they are taking from a third party and installing on every iPhone, iPad and Mac.

Running the scan server side, the worst that can happen is that the match brings down a server.

Running the scan on-device, the worst that can happen is that it crashes every phone running it.

And having "steadfastly refused" those demands in the past, you've now done what they want voluntarily. And as soon as a government passes a law requiring the addition of something else, you'll comply, just as you have all along.

Putting one in before you're told to strikes me as a little bit dumb.

My guess is that they've been offered a deal: implement the backdoor and the anti-trust/monopoly stuff goes away.

Then it would be daft of the government to force Apple to allow alternative app stores, because then they couldn't prevent folk from installing software that might bypass the checks.

Under the current system, the Chinese can avoid the problem simply by not storing stuff in iCloud. Apple even warned them when they were switching over so they had plenty of time to make other arrangements.

This is different.

This piece of software (let's not be coy; it's spyware, plain and simple – it is rifling through your shit looking for other shit) is running on the phone. This means that it can be activated to report on any picture, document or video, regardless of what cloud service it is attached to.

Now, people will jump in and say, "Well, let's just wait until it happens, shall we?"

But some things you know are a bad idea without waiting and seeing. I sometimes think it might be fun to lick a lamppost in sub-zero temperatures, just to see what would happen. But then, on second thoughts, I usually just assume the worst without testing the hypothesis.

You seem to have a finger deep inside Google; do they have something like this, or do they just do the server-side scan? I haven't been able to find any reference to a similar setup at any other tech behemoth.