Popular iOS apps use Glassbox SDK to record user screens without permission [u]

A number of popular iOS apps paying data analytics services for so-called "session replay" technology have the ability to record and play back user interactions, often without asking permission, according to a new report.

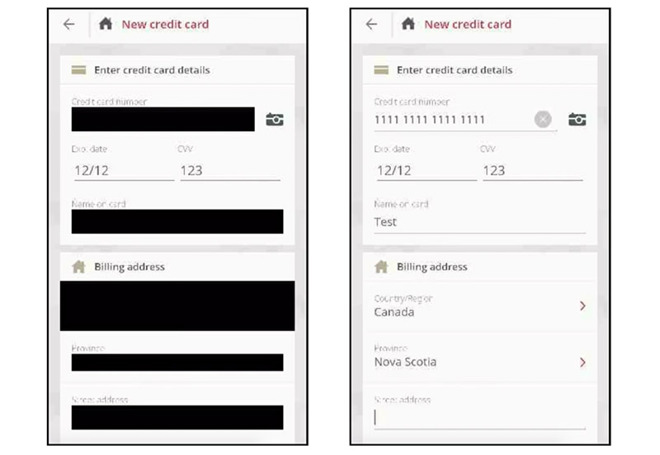

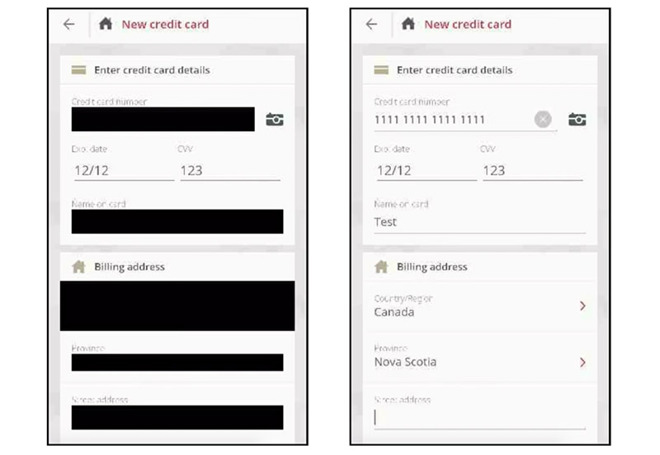

Field masking in Air Canada's iOS app is at times ephemeral. | Source: TechCrunch

According to an investigation conducted by TechCrunch, analytics firm Glassbox, and other companies like it, allow customers to embed session replay technology into their respective apps. These tools capture screenshots and user interactions, including on-screen taps and in some cases keyboard entries, which are sent back to app developers or Glassbox servers for further examination.

Though not as polished as the video-enabled screen recording function built into iOS 12, session replay technology effectively screenshots an app's user interface at key moments to determine whether it is functioning as designed, the report said.

"Glassbox has a unique capability to reconstruct the mobile application view in a visual format, which is another view of analytics, Glassbox SDK can interact with our customers native app only and technically cannot break the boundary of the app," a Glassbox spokesperson told the publication. More specifically, when a keyboard overlay appears above the native app, "Glassbox does not have access to it."

Glassbox customers include big-name corporations like Abercrombie & Fitch and sister brand Hollister, Hotels.com, Expedia, Air Canada and Singapore Airlines.

While user monitoring is nothing new, and in many cases should be expected, mishandling of session replays can lead to leakage of sensitive information.

Citing a recent report from The App Analyst, TechCrunch notes Air Canada's app was found to be sending session replay data containing exposed passport and credit card numbers. This could be a problem, as some companies opt to send app data directly to Glassbox's cloud and not their own servers.

In its own study, which employed man-in-the-middle software to monitor data being sent from target apps, the publication discovered data transmitted to Glassbox was "mostly obfuscated," though some screenshots contained unmasked email addresses and postal codes. Of the apps listed above, Abercrombie & Fitch, Hollister and Singapore Airlines passed session replay data on to Glassbox, while Hotels.com and Expedia siloed data on their own domains.
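To illustrate what masking a session replay payload might involve, here is a minimal Python sketch. It is not Glassbox's SDK or any app's actual code: the event layout, the field names in `SENSITIVE_FIELDS` and the helper `mask_event` are all hypothetical, chosen only to show how sensitive values could be blanked out on the device before anything is transmitted.

```python
import re
from typing import Any, Dict

# Hypothetical field names; a real app would list its own sensitive inputs.
SENSITIVE_FIELDS = {"passport_number", "card_number", "email", "postal_code"}

# Deliberately loose pattern to catch email-like strings inside free text.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def mask_value(value: str) -> str:
    """Replace every character with '*' so only the length survives."""
    return "*" * len(value)


def mask_event(event: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of a captured event with sensitive data masked."""
    masked: Dict[str, Any] = {}
    for key, value in event.items():
        if isinstance(value, dict):
            # Recurse into nested structures such as a form's fields.
            masked[key] = mask_event(value)
        elif key in SENSITIVE_FIELDS and isinstance(value, str):
            # Known sensitive field: mask the whole value.
            masked[key] = mask_value(value)
        elif isinstance(value, str):
            # Other text: mask anything that looks like an email address.
            masked[key] = EMAIL_PATTERN.sub(
                lambda match: mask_value(match.group()), value
            )
        else:
            masked[key] = value
    return masked
```

A sketch like this only helps if every sensitive field is listed and masking is applied to every capture, which is the kind of gap the unmasked email addresses and postal codes point to.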

Further, none of the apps reviewed as part of the investigation make clear in their respective privacy policies that Glassbox technology is being employed to record users' screens.

In response to the TechCrunch report, Glassbox said it is a strong supporter of user privacy and provides customers with tools to obfuscate "every element" of personal data. The company believes that its customers should make users aware that their data is being recorded.

"Glassbox and its customers are not interested in "spying" on consumers. Our goals are to improve online customer experiences and to protect consumers from a compliance perspective," the company said in a statement provided to AppleInsider.

Glassbox went on to say that its platform is secure, encrypted and meets security and data privacy standards and regulations like SOC2 compliance and GDPR. No data is shared with third parties, the company said.

Still, as some Glassbox customers currently do not include mention of user monitoring in Apple-mandated disclosures, and with Glassbox itself lacking requirements of its own, end users are largely unaware that their actions are being so closely observed. More concerning, however, is that iOS app makers are in some cases funneling sensitive data to a third party without express user permission and without proper encryption protocols.

Updated with response from Glassbox

Comments

This sort of behavior should be clearly spelled out when someone installs the app. It’s not necessarily bad but a person should be able to make their own decision on whether to use an app that will be tracking and reporting their every on-screen action.

If I install an App with this SDK, it can ONLY take screen shots of itself. It has no ability to take screen shots when you switch to another App or of iOS itself (like your Settings).

And since everything you do in an App is already known to the App (like everything you type) they’re not getting any additional information.

The only real issue I see is if they send this data to someone else in a form that lets them see something they shouldn’t.

I don't have any third-party apps on my devices.

I think the App Store should have a Russian flag icon for all those apps designed in Russia and a Chinese flag for all those designed in China.

There needs to be a 'Good Housekeeping' seal of approval, where all apps that don't harvest, refine and sell your data get a little logo next to it!

I use DuckDuckGo (which Apple should buy). No Google, FaceBook or Twitter apps.

Apple should put out a Beta, FaceBook-like App, a YouTube-like App, Twitter-like app and just let them grow organically. And advertise/market the Privacy aspect of their offerings!

Arguably any instance of private information should not be transmitted to a 3rd party for any other purpose other than to conduct the requested service.

And how secure is this system? Oh yes, that's right, it's not, since even things like my PASSPORT data get bled to third-parties and their untrusted and insecure cloud infrastructures.

This is HUGE, even though it's confined to just the app in question!

Good job.

The system would have to be perfect (doesn’t exist), the software developers trustworthy (which they aren’t), the hardware perfect (which it isn’t), for a closed system to make sense.

This is why Facebook and Google’s marketing apps required side loading (to get around the “walled garden”) and were still able to be remotely disabled by cancelling the enterprise certificate.

Also your statement about ability to monitor traffic is also false, which should have been obvious to you as the article was able to be written in the first place.

As to the walled gardens comment that "you can’t monitor app activity, the data they send and receive, spyware, etc", I presume the writer didn't read the article. It clearly says "...study, which employed man-in-the-middle software to monitor data". For the avoidance of doubt, "man-in-the-middle" means inserting an entity into the communication path to monitor or otherwise interact with the data.

Apple's walled garden cannot be perfect (of course) but it has two big things going for it:

* It is continually improving as Apple blocks attempts at exploitation - individual exploits won't happen again. This makes future malpractice that bit harder.

* Its sole-provider status gives Apple a very big stick with which to beat transgressors. This in turn gives a big deterrent effect that reduces the number of misbehaving apps being created.

I don't see that sort of benefit for the apps running on Apple's biggest competitor.

How could an app be approved with so blatant a privacy violation? To monitor and report back on keyboard input and screen content!?!?

Except when it doesn't.