Siri may improve accuracy by mapping the room like a HomePod does

New research from Apple and Carnegie Mellon University delves into how smart devices could learn about their surroundings to better understand requests by knowing when and where they are being talked to.

Future HomePods could learn about their surroundings by listening, and asking users

Academics from Apple and Carnegie Mellon University's Human-Computer Interaction Institute have published a research paper describing how Siri, and devices such as HomePod, could be improved by having them listen to their surroundings. While many Apple devices listen, they are explicitly waiting to hear the phrase "Hey, Siri," and anything else is ignored.

It's the same with Alexa, or at least it is in theory, but these researchers advocate having smart devices actively listen in order to determine details of their environment -- and what people are doing there.

"Listen Learner," they say in their paper, "[is] a technique for activity recognition that gradually learns events specific to a deployed environment while minimizing user burden."

Currently, HomePods automatically adjust their audio output to suit the environment and space that they are in. And Apple has filed patents that would see future HomePods using the position of people in a room to direct audio to them.

The idea behind this paper's research is that similar sensors could listen for sounds and detect where they are coming from. The device could then group them so that, for instance, it recognizes which direction the bleeps from a microwave are coming from. Understanding where someone is standing, and which noises are being heard from which directions, could make Siri better able to understand requests or to volunteer information.

"For example, the system can ask a confirmatory query: 'was that a doorbell?', in which the user responds with a 'yes,'" it continues. "Once a label is established, the system can offer push notifications and other actions whenever the event happens again. This interaction links both physical and digital domains, enabling experiences that could be valuable for users who are e.g., hard of hearing."

While the paper repeatedly and exclusively mentions HomePods, it is really concerned with any device with microphones. It suggests that since we all now have an ever-increasing number of devices that are capable of listening, then we already have tools to improve voice control.

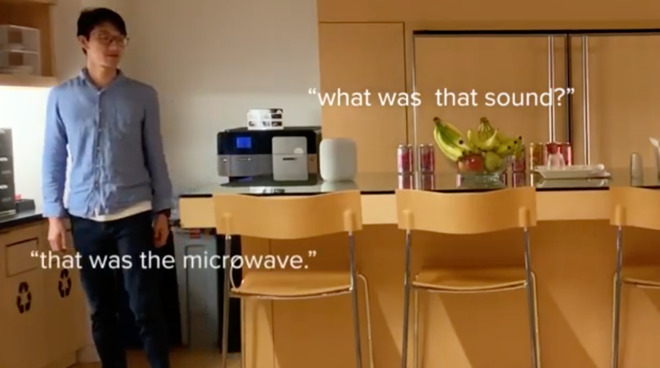

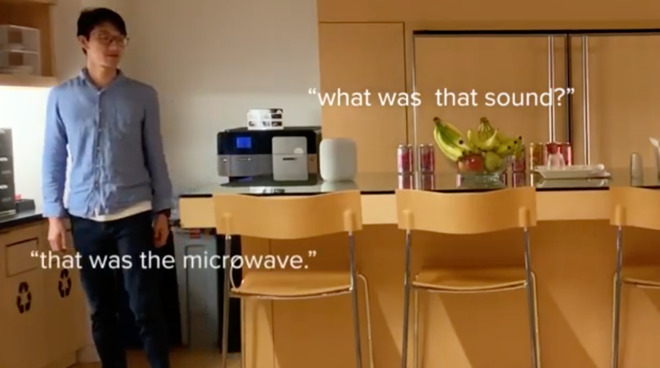

In a video accompanying the paper, the researchers demonstrate how listening like this can improve accuracy, and also how it's more successful than previous attempts to train devices.

The paper, "Automatic Class Discovery and One-Shot Interactions for Acoustic Activity Recognition," proposes that a device be able to listen continuously, although "no raw audio is saved to the device or to the cloud." It keeps doing this, grouping similar sounds together so that they can later be given labels or tags, until it has heard enough examples to treat a group as a distinct sound.

"Eventually, the system becomes confident that an emerging cluster of data is a unique sound, at which point, it prompts [the user] for a label the next time it occurs," explains the paper. "The system asks: 'what sound was that?', and [the user] responds with: 'that is my faucet.' As time goes on, the system can continue to intelligently prompt [the user] for labels, thus slowly building up a library of recognized events."

As well as a general "what sound was that?" kind of question, it might be able to guess and so try asking a more specific question. "The system might ask: 'was that a blender?'" says the paper. "In which [case the user] responds: 'no, that was my coffee machine.'"
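The cluster-then-ask loop the paper describes can be illustrated with a toy sketch. This is not the researchers' algorithm: the class name `ListenLearnerSketch`, the `radius` distance threshold, the `min_examples` confidence rule, and the two-number "embeddings" standing in for processed audio are all assumptions made up for illustration. The sketch stores only running cluster centroids, never the inputs themselves, and asks for a label once a cluster has been heard often enough.

```python
import math


class ListenLearnerSketch:
    """Toy class discovery: group similar sound embeddings, then ask for a label."""

    def __init__(self, radius=1.0, min_examples=3):
        self.radius = radius              # hypothetical: max distance to join a cluster
        self.min_examples = min_examples  # hypothetical: examples needed before asking
        self.clusters = []                # each: {"centroid", "count", "label"}

    def _nearest(self, embedding):
        """Return the closest cluster within `radius`, or None."""
        best, best_distance = None, self.radius
        for cluster in self.clusters:
            distance = math.dist(cluster["centroid"], embedding)
            if distance <= best_distance:
                best, best_distance = cluster, distance
        return best

    def hear(self, embedding, ask_user):
        """Process one sound; return its label if known, else None."""
        cluster = self._nearest(embedding)
        if cluster is None:
            # Unfamiliar sound: start a new, unlabeled cluster.
            self.clusters.append(
                {"centroid": list(embedding), "count": 1, "label": None}
            )
            return None
        # Familiar sound: update the running mean of the cluster.
        cluster["count"] += 1
        n = cluster["count"]
        cluster["centroid"] = [
            c + (x - c) / n for c, x in zip(cluster["centroid"], embedding)
        ]
        # Ask once, the first time the cluster is "confident" enough.
        if cluster["label"] is None and n >= self.min_examples:
            cluster["label"] = ask_user("What sound was that?")
        return cluster["label"]


# Made-up embeddings: four similar sounds and one outlier.
learner = ListenLearnerSketch(radius=1.0, min_examples=3)
answers = iter(["that is my faucet"])
heard = [(0.0, 0.1), (0.1, 0.0), (5.0, 5.0), (0.05, 0.05), (0.0, 0.0)]
results = [learner.hear(e, lambda question: next(answers)) for e in heard]
print(results)
```

In this sketch the user is interrupted only once, on the third occurrence of the recurring sound, and later occurrences are recognized without any further prompt. The lone outlier never triggers a question, which is one simple way of keeping the user's burden low.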

While the paper is chiefly concerned with the effectiveness of a device asking the user questions like this, the researchers explain that they also tried specific use cases. "We built a smart speaker application that leverages Listen Learner to label acoustic events to aid accessibility in the home," it says.

There is no indication of Apple or other firms integrating this idea into their smart speakers yet. Instead, this was a short-term focused test, and the team have recommendations for further research.

However, it's promising because they conclude that this test "provides accuracy levels suitable for common activity-recognition use cases," and brings "the vision of context-aware interactions closer to reality."

Comments

For one thing, I'd set up a panel of volunteers who are told to act as if Siri can do anything and learn the heck out of what types of things people want from this. Hell, have a small army of humans acting as "virtual Siris" so the experience is realistic.

Here's a trivial example of something I experienced with Siri the other day. At the end of a board game, there can be a lot of math to calculate scores so I tried Siri. It worked! But she is rather annoying.

me: "Hey Siri what is 15 plus 21 plus 4 plus 45 plus 16 plus 9?"

Siri: "15 plus 21 plus 4 plus 45 plus 16 plus 9 is 110"

Using the Star Trek standard I should be able to interrupt with "just the answer" and she would omit echoing my question and just say 110. And the next time I ask she would also skip to the answer.

I know, we've had this conversation/debate 100 times already. I personally don't care if Siri is better than Alexa right now or not. The fact is this is like debating which pre-iPhone smart phone was the best. I'm sure Apple is working on this; I just hope they are really working on it.

That said, I have very few issues in my personal Siri usage and use it multiple times a day on my iPhone, Watch and HomePod, less frequently on Mac, iPad and TV. My biggest issue is using Siri on my Apple Watch frequently results in nothing happening, it just hangs or the Siri screen goes back to my Watch face without doing a thing.

The thing that baffles me the most about Siri is how different people can ask it the exact same question and get different results. A couple of years ago someone posted a screen shot here of what Siri provided as a response to who starred in a particular movie. The response was a fail in that it did not provide the answer. When I saw the post I immediately asked Siri the same question, reading it from the screen shot in front of me and Siri presented to me a list of the stars of the movie. What the?

/s

Apparently Apple invented nothing and HomePod is a knockoff of Alexa somehow.

Seriously though, it's a shame an argument even exists. The sad part is Apple already has technology that blows the Siri wannabes away but they haven't implemented it. Either because of technical issues or they're holding back for a special update/product, I do not know.

Another really annoying thing is it's impossible to talk to a particular Siri. Sometimes if my watch is on the top of my wrist and I ask Siri how long is left on a timer for example, watch Siri pipes up and says there are no timers, but then HomePod Siri stays silent despite a timer running. Either we need to be able to assign devices names, or it needs to be something like "Hey HomePod" or "Hey watch". When I had just a HP and an iPhone it wasn't an issue, but when I have HP, multiple iPhones, an Apple Watch and AirPods all active at once, it's pot luck which one will activate.

I know several people with an AW and they never really use Siri as it's so bad. I asked it to delete an email it just read the other day, and it said it wasn't "allowed" to do that. What?

While I'm talking about my wishlist, I'd also like to be able to do "offline" Siri questions for things that don't need an internet connection. "What's a 15% tip on $23.87?" "What time is my next appointment?" "What is 35 Fahrenheit in Celsius?" "Set a timer." "Make a note." "Set a reminder." "Take dictation in notes." That's all stuff that does not need an internet connection. Asking "how long will it take to get to my next appointment" can give you a rough estimate, but it can't be exact without live traffic data. It can still give you a rough estimate of when you'll need to leave, though. These are all things that are constant, or only need the voice-to-text features of the phone. You're not asking it to look up something online. A Fahrenheit to Celsius conversion is never going to change. 212F ALWAYS equals 100C, no matter what. A 15% tip on $23.87 is ALWAYS $3.58. Setting a reminder, even if it is location based, can still be set, and it can look the location up later ("Remind me to stop at the store to get milk when I leave the office".) If you are at home, you should be able to control HomeKit stuff even without an Internet connection ("Set the thermostat to 68." "Turn on the tv"... etc). So that's been bugging me for years. That should TOTALLY be possible to do.

OK. Rant over.

The first is due to privacy, though Apple claims to have differential privacy which means they get the data they need to improve and it's supposedly anonymised.

Secondly, I also hate that Siri requires a persistent internet connection. It's even more stupid that it just doesn't work at all on the watch when there's no connection. You can actually (or at least could a few iOS revisions ago) turn off Siri and use the old basic voice recognition for simple things like stopping and starting music - which doesn't use the Internet. That works reasonably well, and it's certainly better than no Siri at all which is what you get when you have no connection.

The logic around the Siri interaction via AirPods pisses me off so much. Sometimes when asking Siri to change the volume via my AirPods, it thinks it may be able to process the request without ducking the playing audio to listen. But occasionally ducking the audio takes so long that I assume it's not heard the "hey Siri" and I try again... only it did actually hear and now I've just made a second request. And then in any case after the ducking, it sometimes (maybe 1/5 times) delays for 5 or 6 seconds and says "just a moment..." then 6 seconds later "please hold the line..." and after a further 6 seconds says "I'm having problems with the connection blah blah." Try again and it's usually fine, but then this wouldn't be an issue if it could process things locally! It's *really* annoying and embarrassingly bad.

This. When my AW 4 was brand new, it was my most-responsive Siri device. Now 1.5 years in, it is...slow. Unresponsive. I'd like to know where & why it's falling down. Siri requests are processed on a cloud-based server, so I have to assume the newer OS is bogging down its ability to parse out voice queries to the point of being unresponsive. This is unacceptable.

Reminds me of the story about Safari development inside Apple -- if your code check-in slows down Safari during unit testing, it's rejected. They need to do this with Siri.

If multiple devices are in range of each other, they communicate with each other to establish a response hierarchy:

The device that responds is the one that heard you best or was recently raised or used.

https://support.apple.com/en-us/HT208472

...Sounds like it still needs improvement. I didn't know this caveat:

- If you have an iPhone or iPad, you can place it face down so that it doesn't respond to "Hey Siri."

I'd much prefer they improve it in an automated fashion rather than have to address each device separately.

If you want to see what services and data types made use of it recently on your iDevice, go to Settings>Privacy>Analytics>Analytics data> and look for lines that begin with "DifferentialPrivacy".