Future Apple devices will share and edit 3D AR images

Rather than passively displaying virtual Apple AR objects, "Apple Glass" may let users share 3D data and manipulate it in editing apps.

Editing shared 3D AR objects

Apple's myriad patents and patent applications for Apple AR already include ones for displaying 3D data from other devices. Two new patents, however, show that Apple wants users to be able to selectively share or retrieve objects, and also edit them.

"Displaying 3D content shared from other devices," is a newly-revealed patent that proposes Head-Mounted Displays (HMD) could use "three dimensional (3D) content from a separate device."

Apple's patent is unusually blunt about current systems that try to do this. "Existing computing systems and applications do not adequately facilitate the sharing and use of 3D content to provide and use CGR environments on electronic devices," it says.

What Apple wants to see is an HMD able to display "3D content corresponding to received data objects in CGR environments," regardless of where those objects come from. Rather than every user seeing only AR or VR objects generated by their own hardware, they should be able to see what other people have made.

"For example, a first user, who is at home using a first device," says Apple, "can receive a data object corresponding to a couch from a second user who is in a retailer store looking at the couch."

"In this example, the second user uses a second device to create or identify a data object corresponding to the couch," it continues, "e.g., using a camera of the second device to create a file that includes a 3D model of the couch or identifying a file or data storage address of a file that includes a 3D model of the couch."

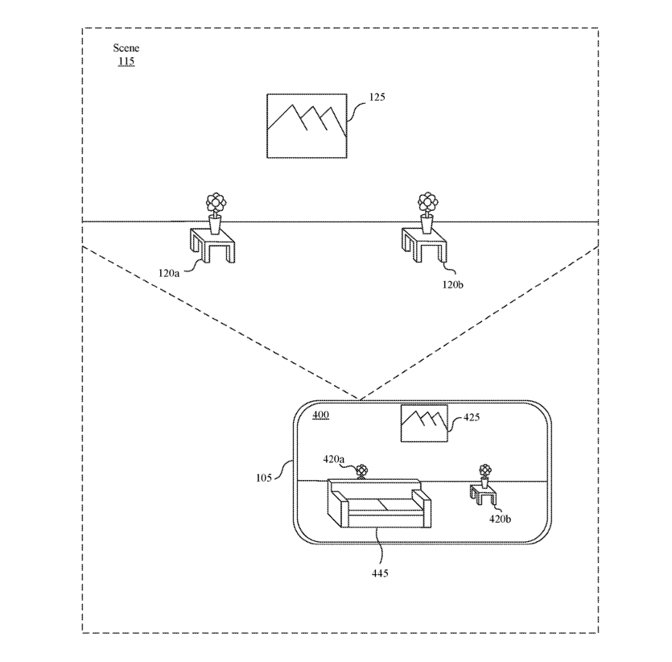

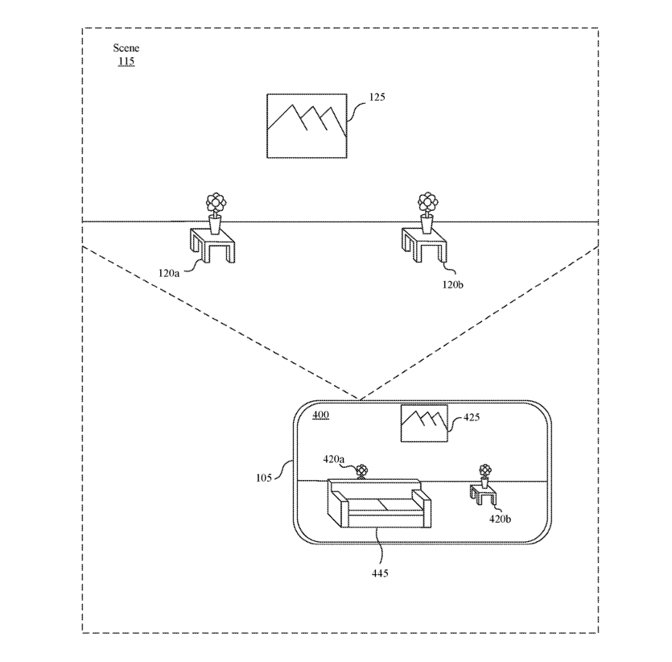

Detail from the patent showing (top) a real-world environment and (bottom) the same space with a shared 3D object in it

Just as you can already place furniture from Ikea's database into that company's AR app, so here a friend at the local store could send you a 3D version of the couch.

The patent is chiefly concerned with different ways of capturing and transmitting that object. It includes details about accompanying the object with text, such as an email message, identifying the source.
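As a rough illustration of what such a shared item might carry, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `SharedObject` name, its fields, and the placeholder address are hypothetical and do not come from the patent. The patent only describes a data object that holds either a file containing a 3D model or the storage address of such a file, optionally accompanied by text identifying the source.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SharedObject:
    """Hypothetical container for one shared item (not a format from the patent)."""
    name: str
    sender: str                            # who shared it, e.g. shown in a message
    message: str = ""                      # optional accompanying text
    model_bytes: Optional[bytes] = None    # the 3D model file itself, if sent inline
    model_address: Optional[str] = None    # otherwise, where the model file is stored

    def __post_init__(self):
        # Exactly one of the two model sources must be supplied.
        if (self.model_bytes is None) == (self.model_address is None):
            raise ValueError("provide exactly one of model_bytes or model_address")

    def is_inline(self) -> bool:
        """True when the model file travels with the object itself."""
        return self.model_bytes is not None


# The friend in the store shares the couch by address rather than by file.
couch = SharedObject(
    name="couch",
    sender="second user",
    message="What do you think of this one?",
    model_address="placeholder-address-for-couch-model",  # hypothetical value
)
```

In this sketch the receiving device would check `couch.is_inline()` to decide whether it already has the model or still needs to fetch it from the stored address.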

"[Then] the first user may reposition the couch relative to the real world tables in the captured images," says Apple, "and then physically move the device around the room to view the couch from different viewpoints within the room."

"Conventional 180 degree or 360 degree video and/or images are stored in flat storage formats using equirectangular or cubic projections to represent spherical space," explains Apple. "If these videos and/or images are edited in conventional editing or graphics applications, it is difficult for the user to interpret the experience of the final result when the video or images are distributed and presented in a dome projection, cubic, or mapped spherically within a virtual reality head-mounted display."

"Editing and manipulating such images in these flat projections requires special skill and much trial and error," continues the patent. "Further, it is not an uncommon experience to realize after manipulation of images or videos composited or edited with spherical that subsequent shots are misaligned, or stereoscopic parallax points do not match in a natural way."

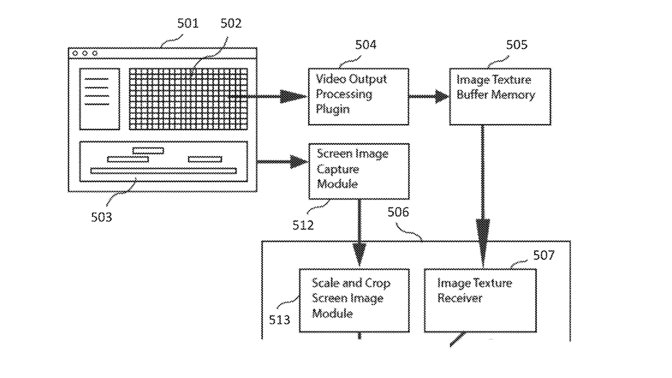

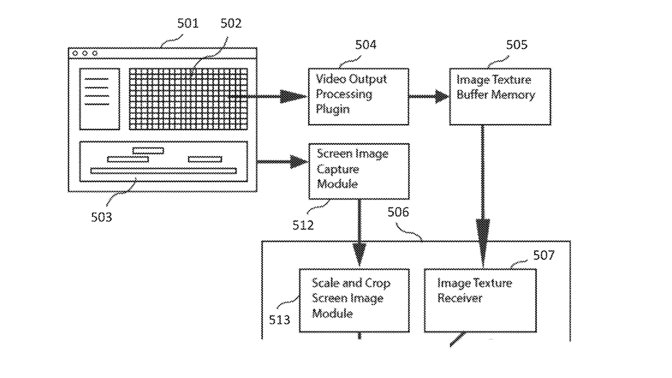

Detail from the patent showing part of the processing for correctly editing shared 3D objects

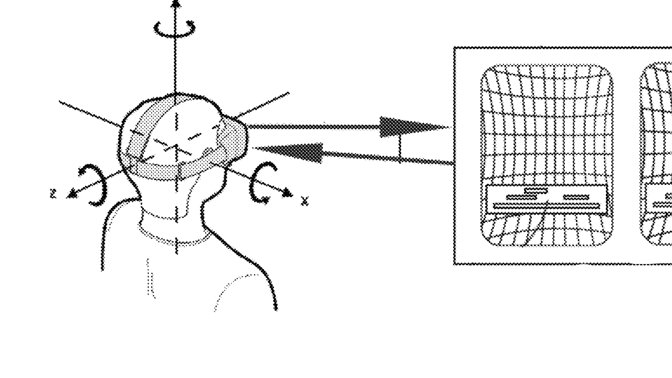

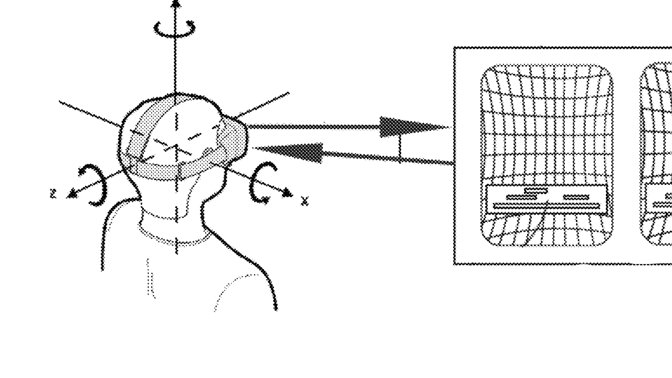

Manipulating shared 3D objects

"Method and system for 360 degree head-mounted display monitoring between software program modules using video or image texture sharing," is the second, related new patent. This one is about how users can receive 3D information in a file and then correctly manipulate it.

"Conventional 180 degree or 360 degree video and/or images are stored in flat storage formats using equirectangular or cubic projections to represent spherical space," explains Apple. "If these videos and/or images are edited in conventional editing or graphics applications, it is difficult for the user to interpret the experience of the final result when the video or images are distributed and presented in a dome projection, cubic, or mapped spherically within a virtual reality head-mounted display."

"Editing and manipulating such images in these flat projections requires special skill and much trial and error," continues the patent. "Further, it is not an uncommon experience to realize after manipulation of images or videos composited or edited with spherical that subsequent shots are misaligned, or stereoscopic parallax points do not match in a natural way."

Apple's proposal is to "continuously acquire the orientation and position data" from an HMD and "simultaneously render a representative monoscopic or stereoscopic view of that orientation to the head mounted display, in real time."

So a user gets a 3D image, perhaps shared from another user, and the HMD alters its display to match the position of the wearer. It also dynamically modifies the image it's displaying "when the image data is modified by the media manipulation application."
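To make those two ideas more concrete, here is a minimal Python sketch, not Apple's method. It assumes a standard equirectangular frame, where horizontal position maps to yaw and vertical position to pitch, and it uses hypothetical names throughout (`Pose`, `view_centre`, `horizontal_stretch`, `PreviewMonitor`, and an `image_version` counter standing in for "the image was edited"). Actual rendering is replaced by a simple counter.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose:
    yaw_deg: float    # left/right head rotation, -180..180, 0 = straight ahead
    pitch_deg: float  # up/down head rotation, -90..90, 0 = horizon


def view_centre(pose, width, height):
    """Pixel in an equirectangular frame that the wearer is looking at."""
    x = (pose.yaw_deg + 180.0) / 360.0 * width
    y = (90.0 - pose.pitch_deg) / 180.0 * height
    return x, y


def horizontal_stretch(pitch_deg):
    """How much wider a small feature is drawn in the flat frame at this pitch."""
    return 1.0 / math.cos(math.radians(pitch_deg))


class PreviewMonitor:
    """Redraws only when the head pose or the edited image has changed."""

    def __init__(self, width, height):
        self.width, self.height = width, height
        self._last_state = None
        self.centre = None
        self.redraws = 0

    def update(self, pose, image_version):
        state = (pose, image_version)
        if state == self._last_state:
            return False  # nothing changed, keep the current view
        self._last_state = state
        self.centre = view_centre(pose, self.width, self.height)
        self.redraws += 1  # stand-in for rendering the view to the headset
        return True
```

In this sketch, calling `update` with a new `Pose` or a higher `image_version` triggers a redraw, and repeating the same values does not. The `horizontal_stretch` helper hints at why flat editing is hard to judge: at 60 degrees above the horizon, a feature is drawn about twice as wide in the flat frame as it appears on the sphere.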

The first patent is credited to five inventors, including Norman N. Wang. His previous related patents include one on "generating refined, high fidelity" 2D and 3D textures.

Timothy Dashwood, the inventor named on the second patent, is also credited on a previous one regarding the stereoscopic rendering of virtual 3D objects.

Stay on top of all Apple news right from your HomePod. Say, "Hey, Siri, play AppleInsider," and you'll get the latest AppleInsider Podcast. Or ask your HomePod mini for "AppleInsider Daily" instead and you'll hear a fast update direct from our news team. And, if you're interested in Apple-centric home automation, say "Hey, Siri, play HomeKit Insider," and you'll be listening to our newest specialized podcast in moments.