Thousands of apps violate US child privacy law

A report examined Apple's and Google's app stores to identify apps directed at kids and review their privacy policies, and many of the apps it found don't come close to complying with a US child privacy law.

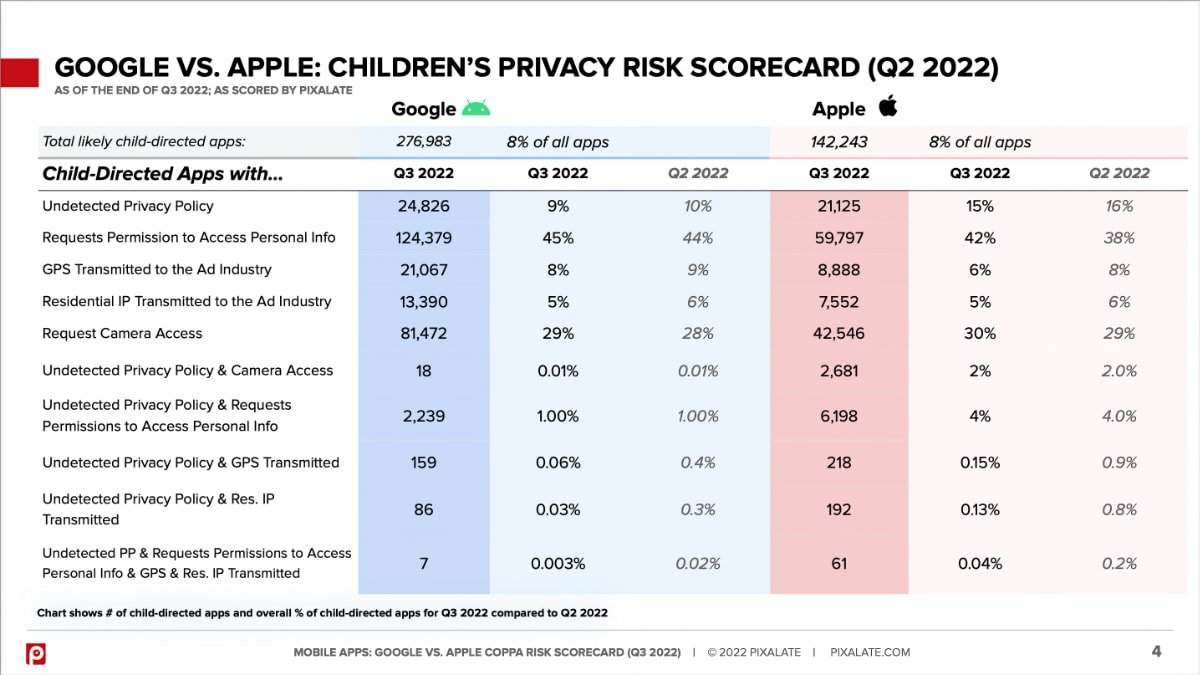

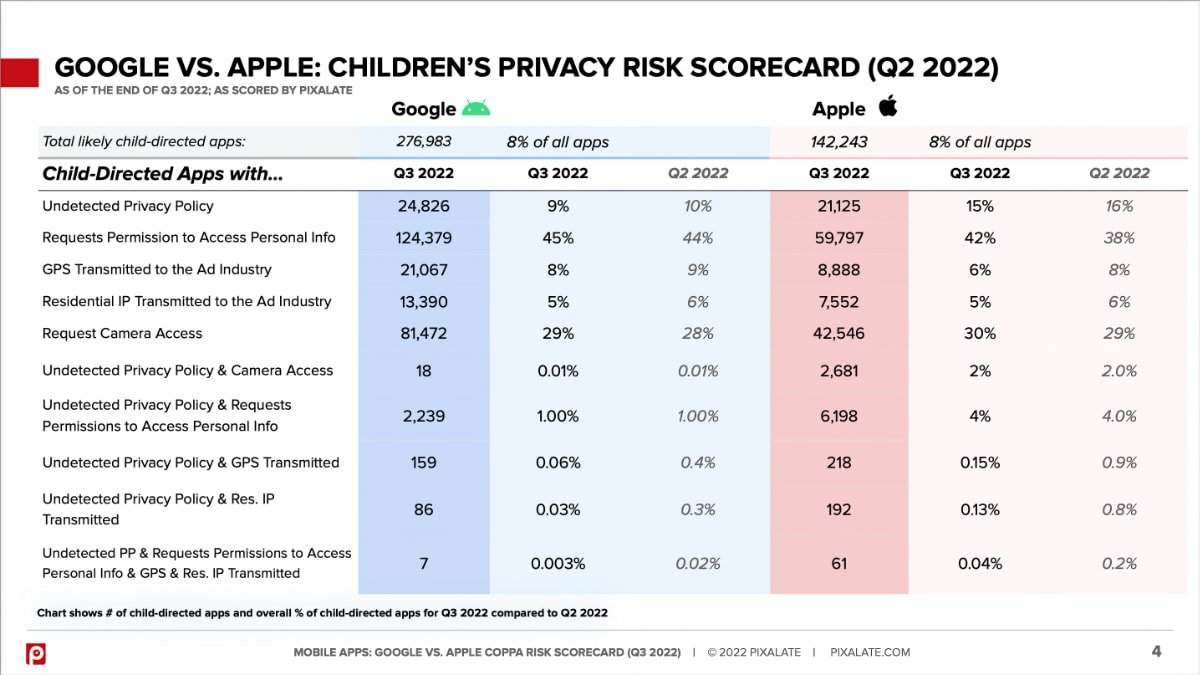

Children's apps and privacy

The United States passed the Children's Online Privacy Protection Act (COPPA) in 1998. It aims to protect children's privacy online for those under 13 years of age.

App stores, including Apple's and Google's, must abide by the law. Researchers at Pixalate examined how each company's app store protected children's privacy during quarter three of 2022.

Pixalate's research produced several main takeaways about child privacy in the app stores and in the apps themselves.

Examining the results

Pixalate found about 420,000 likely child-directed mobile apps across the Google and Apple stores as of quarter three, a 1% decrease quarter-over-quarter. About 8% of apps in both stores are likely child-directed. Sixty-four percent of likely child-directed apps have no country of registration identified, and only 9% are registered in the US.

Each app store has performed better in some categories and worse in others. For example, in quarter three of 2022, 192 likely child-directed apps in the App Store had an undetected privacy policy and transmitted IP addresses, while only 86 apps did the same in the Play Store.

For apps that didn't transmit IPs but still had an undetected privacy policy, 15% were found on the App Store, or 21,125 apps, about the same as quarter two. The Play Store had 24,826 of those apps.

How each company's app stores rate for children's privacy

In the Play Store, 124,379 apps requested permission to access personal info, while 59,797 did the same on Apple's platform. Overall, 44% of all likely child-directed mobile apps request permissions to access personal data, an increase from 42% quarter-over-quarter.
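As a rough sanity check, the two per-store counts can be compared against the 44% figure. This sketch assumes both counts refer to likely child-directed apps and uses the article's "about 420,000" as the denominator, so the result is only approximate. The variable names are illustrative and are not taken from Pixalate's report.

```python
# Figures quoted in the article (Pixalate, quarter three of 2022).
play_store_apps = 124_379        # Play Store apps requesting personal-info permissions
app_store_apps = 59_797          # App Store apps requesting the same
total_child_directed = 420_000   # "about 420,000", so treat as approximate

# Assumption: both counts are subsets of the likely child-directed total.
requesting = play_store_apps + app_store_apps
share = requesting / total_child_directed

print(f"{requesting:,} apps, roughly {share:.0%} of the total")
# prints "184,176 apps, roughly 44% of the total"
```

Under those assumptions, the combined count lines up with the reported 44%.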

In quarter three, advertisers spent three times more per app on likely child-directed apps than general audience apps. About 59% of likely child-directed apps share GPS and IP addresses with third-party digital advertisers compared to 43% of likely non-child-directed apps.

Eighty-one percent of the top 1,000 most popular likely child-directed apps in the Play Store transmit location or IP data in the ad bid stream.

Apple is doing better across most categories than Google, but improvements can be made, especially as Apple has increased advertising in the App Store -- to some detriment.

The company also has some privacy features on its platforms, including App Tracking Transparency. Introduced in iOS 14.5, it aims to significantly reduce how much user data is tracked by other companies for app marketing purposes.

Read on AppleInsider

Comments

Yep, it'll take years for an app to be released. Checking each character of the code can be time consuming.

I'd guess that most of what Apple has to do to reduce their own risk as a marketplace can be automated fairly easily, but enforcement will remain problematic. If a developer claims to be from a particular country it's not Apple's job to prove or disprove that - verification of documentation won't need to be onerous.