Apple prepares HomeKit architecture rollout redo in iOS 16.3 beta

After halting the rollout of HomeKit's new architecture in iOS 16.2, Apple has resumed testing the platform, which has resurfaced in the iOS 16.3 beta.

In December, Apple withdrew the option to upgrade HomeKit to the new architecture, following reports that the update wasn't working properly for users. It now seems that Apple is preparing to try again with the next round of operating system updates.

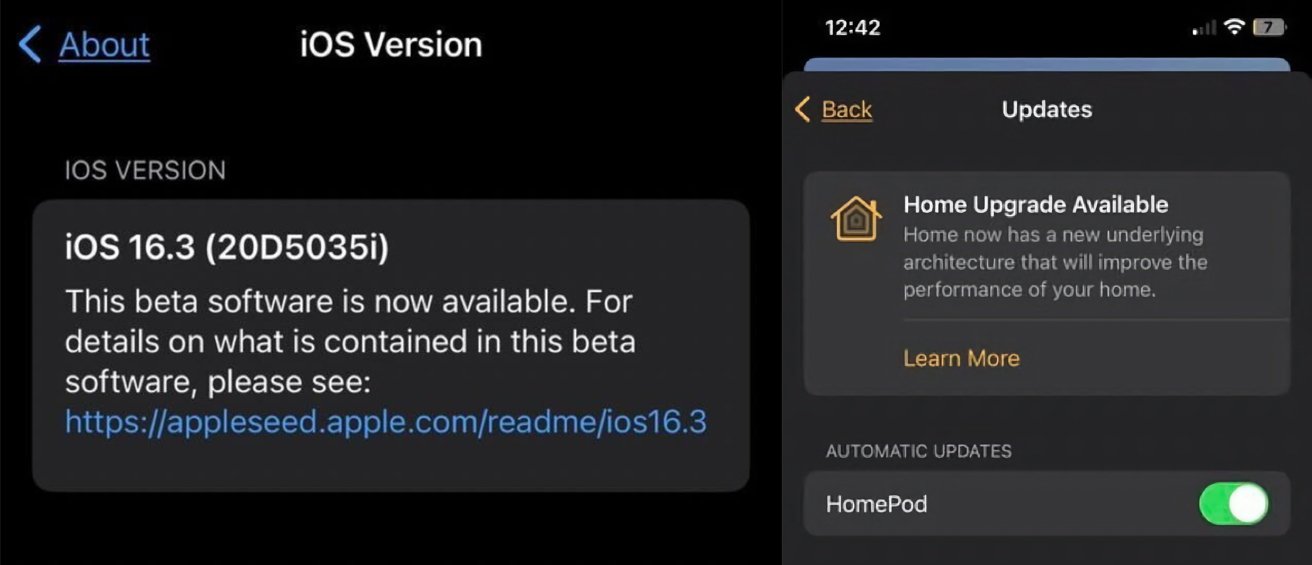

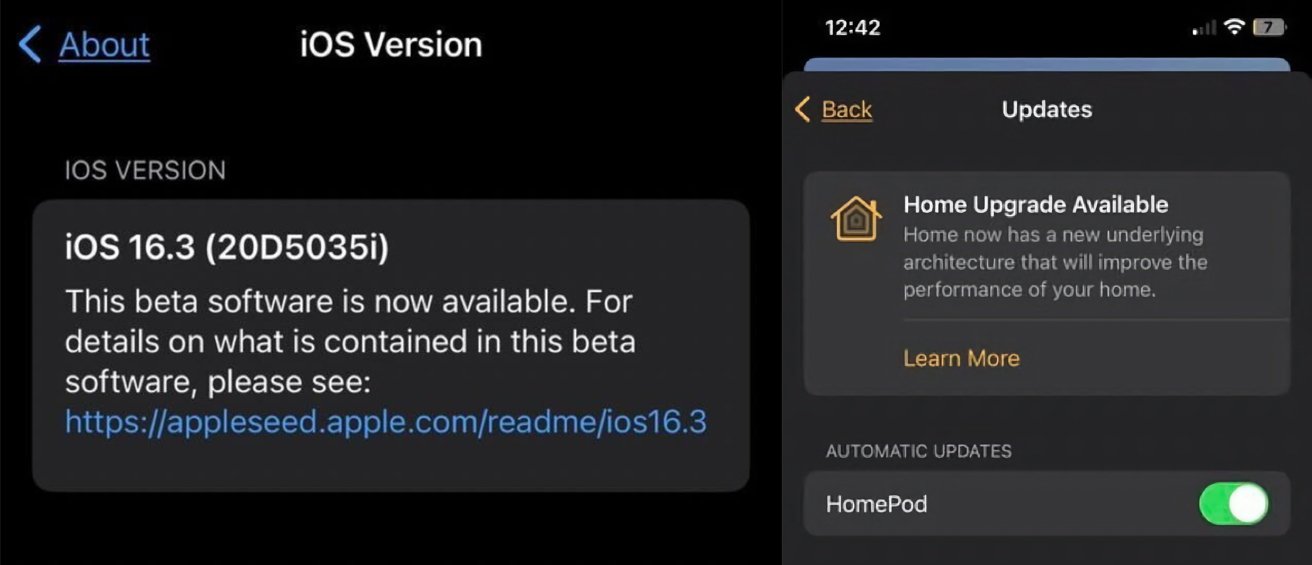

Screenshots from the iOS 16.3 beta show a "Home Upgrade Available" message in the Home app, promising a "new underlying architecture that will improve the performance of your home." This is the same update message that appeared in iOS 16.2 before the upgrade was pulled.

Screenshots sent in by Anthony Powell

The inclusion of the notification in the beta is a strong indication that Apple believes the problems with the update have been resolved, and that it will try to release it to the public once again.

During the previous attempt, users reported devices stuck in an "updating" mode after the upgrade had completed, with some devices becoming unresponsive or failing to update fully. At the time, it was unclear what had caused the problems, as there were no obvious commonalities between the reports.

With the upgrade's reappearance in the iOS 16.3 beta, it seems Apple is confident it has worked out the problems and is willing to give it a second try.

Read on AppleInsider

Comments

I can't downgrade my home even if I reset everything, because despite the upgrade being cancelled, new homes still use the new architecture. Besides that, resetting doesn't work properly anymore either, even with the special HomeKit reset profile. It's a mess.

That said, the upgrade seemed to improve the responsiveness and reliability of HomeKit. I'm sure they could have used the HomeKit hubs as a bridge between the old and new versions, though that would give less incentive to upgrade, of course.

https://www.reddit.com/r/HomeKit/comments/10bm6ba/cant_use_ipad_with_162_running_as_home_hub_after/

I dunno about this part. I'm a fan of agile software development; it came into being exactly for the reason of "building the right thing & building the thing right". It's specifically for dealing with high amounts of complexity and uncertainty, challenges that made plan-based waterfall projects very difficult and prone to failure with modern, complex software and shifting requirements.

That being said, many engineering teams think they know agile development without actually knowing it, lacking training in and a true understanding of the concepts and practices. This is to their detriment.