Not all new Apple Intelligence features will be available in the fall

Although iOS 18 will be arriving in the fall as usual, many Apple Intelligence features are on a slower rollout schedule. Here's what to expect, and when.

Craig Federighi rolled out Apple Intelligence

Users should expect only a handful of the many features unveiled as part of Apple Intelligence to be available when the new updates arrive. Even those that are available on release day will be noted as "previews," with further features arriving late in 2024 or as late as early 2025.

Bloomberg reported on Sunday that many features of Apple Intelligence won't even be available to developers until later this summer. As noted during the WWDC keynote, even those features will be limited to US English at first.

What Apple Intelligence features are arriving in 2024

To be clear, a number of the main AI features highlighted in the WWDC presentation are likely to be available in the initial OS updates this fall. These include notification prioritization and the ability to summarize long texts, emails, and webpages.

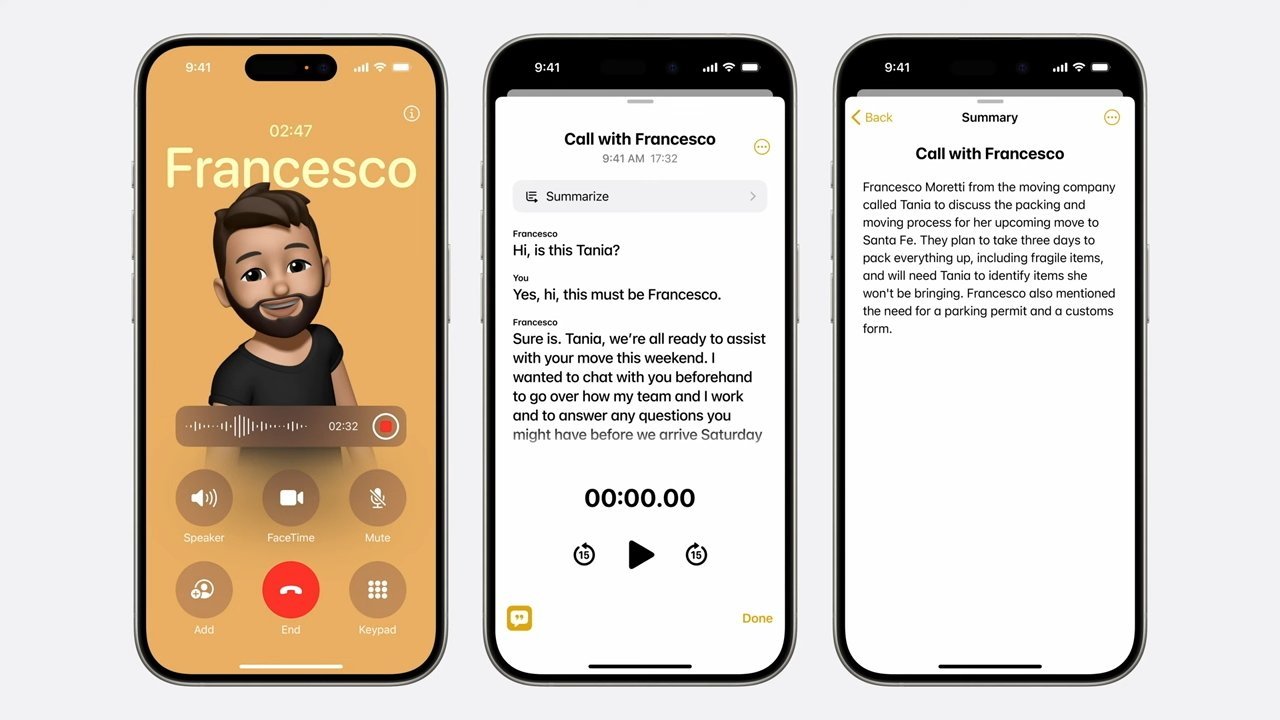

Also scheduled to be available at the official release are the Genmoji image generation and Grammarly-like writing improvement tools. Transcription capabilities already seen in the Podcasts app will now spread to other voice recordings, and voicemail transcription will be improved.

Wider transcription abilities should be arriving with iOS 18's initial release this fall.

In addition, the ability to solve math problems written out in Notes and elsewhere should also be available at launch. Again, while these features will be present in the official first releases of iOS and iPadOS 18 and macOS Sequoia, they will still be labeled as "previews."

Following the main release, some features teased during the keynote will arrive later in 2024. This includes a Mail app redesign that will automatically group emails by type.

Some additional features in the Home app, the ability to edit spatial video, and some expanded features coming to the Apple Vision Pro are likely to be pushed back until the .1 or .2 updates. Swift Assist, a code-writing feature for developers, will also be rolled out later.

Typically, the initial releases of major system updates arrive in September, ahead of new hardware launches. The first point updates to new OS versions tend to arrive later in the year.

What Apple Intelligence features are arriving in 2025

Given that iOS 18 and the related upgrades are introducing many new features, it makes sense for the company to parcel out the arrival of the biggest new features. Doing so allows the company to add more languages as quickly as possible, while avoiding concentrating too many engineering resources on any one area.

Apple is still building out its new Private Cloud Compute facilities, which is a massive server undertaking. Its partnership with OpenAI, and potentially further partners, coming later this year, will assist with queries from users already comfortable with chatbots.

Limiting the scope of such features will allow for fast fixes to any incorrect information, sometimes called "hallucinations," something Apple is very keen to minimize.

One of the biggest features that is likely to be delayed until early 2025 is the revamp of Siri, the voice assistant. Some of the advanced "personal context" features Apple highlighted in its presentation will not be fully present in the initial OS launches this fall.

Not all of Siri's promised revamp and new abilities will be available at first.

Contextual answers to questions like "when is my mom's flight landing" or asking it to optimize a photo and send it in a message will have to wait. That's not to say that Siri won't feature the new interface seen in the presentation, or better understand users' queries right from day one of the new OS versions.

Also available in the near future will be an enhanced "type to Siri" option for those who don't wish to speak or cannot speak clearly to Siri.

In the meantime, Apple is still considering partnerships with other AI providers to help bridge the delays while its own new features roll out. The company is said to still be working on potential deals with Google and Anthropic, for example, and has reached out to Baidu and Alibaba for potential chatbot availability in China.

Rumor Score: Likely

Read on AppleInsider

Comments

These OS ideas are pretty much universal, and different implementations of them come to market. Let's take a trip back in time. Yes, the article is over seven years old:

https://www.forbes.com/sites/bensin/2017/03/15/honor-magic-hands-on-huaweis-face-recognizing-a-i-powered-concept-phone/

The competition still to this day doesn’t have such sophisticated facial recognition and started using dedicated AI chips years after Apple.

The 'concept' angle wasn't that it was some kind of phone for the future that never got released. The concept was of using AI to tie into a user's life/phone experience through the phone.

Those concepts were rolled out across lots of Huawei/Honor phones from then on.

You are incorrect about facial recognition on the competition. Huawei's has always been far ahead of Apple's.

Apple was just plain lazy with its depth sensing capabilities. It was also lazy with its ML efforts back then which were largely restricted to imaging (and slow).

Huawei brought a raft of depth-sensing advances to market. Firstly, it made the 'notch' physically smaller. Landscape unlock (it took Apple years to implement that). 3D small object modeling/animation. 'Eyes On' AOD. Privacy enhancements. Smart rotate (locking rotation to your eyes instead of the device). AI Private View.

All while offering an underscreen fingerprint scanner too. Perfect for the pandemic.

Technically, Huawei was first to bring a neural processing unit to mainstream phones. The 'years after Apple' leaves me a little perplexed. That's why Huawei's AI-infused 'Night Mode' was so far ahead of Apple's (well, Apple's was non-existent to be honest) and could take longer handheld exposures using AIIS.