Nvidia using Apple Vision Pro to control humanoid robots

A new set of Nvidia tools lets developers work on humanoid robotics projects, with the robots controlled and monitored using an Apple Vision Pro.

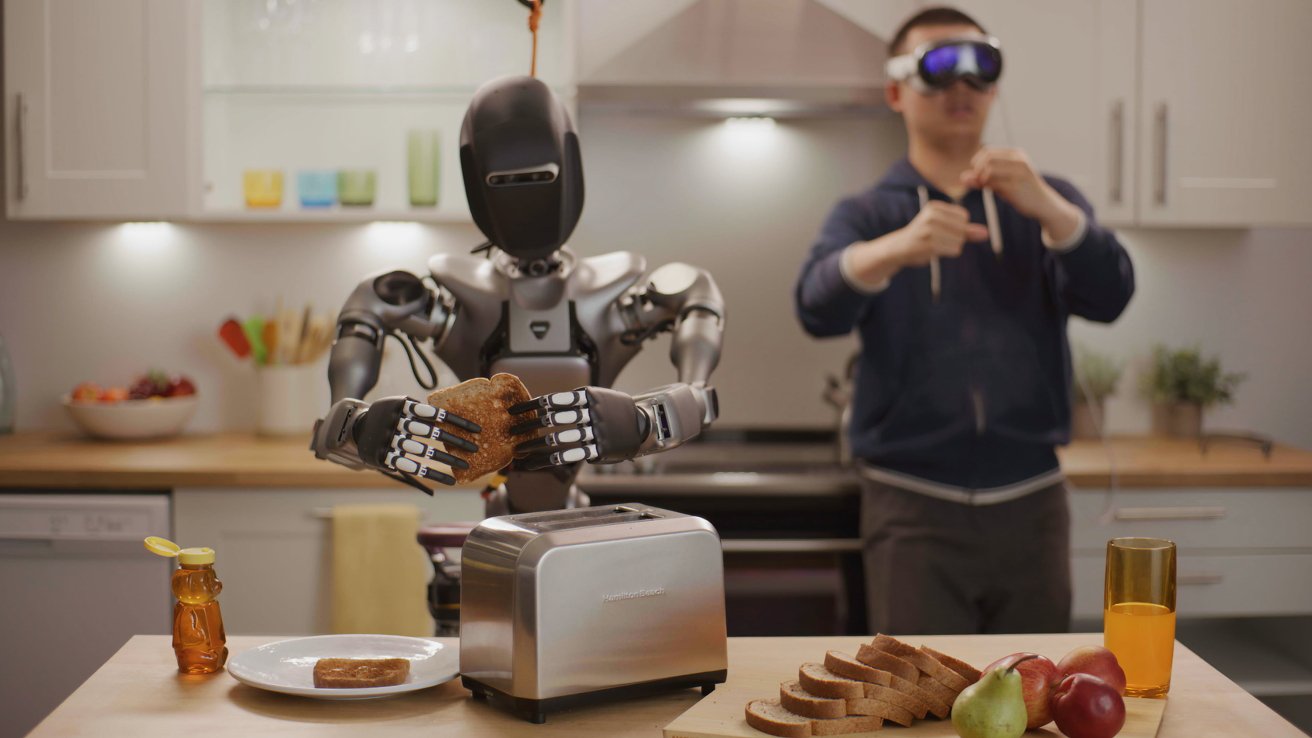

A robot being controlled by an Apple Vision Pro [Nvidia]

Developing humanoid robots poses many challenges, one of which is how to control such highly technical machines. To help in this area, Nvidia has made available a number of tools for robotic simulation, including some that assist with control.

Provided by Nvidia to major robot manufacturers and software developers, the suite of models and platforms is meant to help train a new generation of humanoid robots.

The collection of tools includes what Nvidia refers to as NIM microservices and frameworks, intended for simulation and learning. There's also the Nvidia OSMO orchestration service for dealing with multi-stage robotics workloads, as well as AI and simulation-enabled teleoperation workflows.

As part of these workflows, headsets and spatial computing devices like the Apple Vision Pro can be used not only to view data but also to control hardware.

"The next wave of AI is robotics and one of the most exciting developments is humanoid robots," said Nvidia CEO and founder Jensen Huang. "We're advancing the entire NVIDIA robotics stack, opening access for worldwide humanoid developers and companies to use the platforms, acceleration libraries and AI models best suited for their needs."

Apple Vision Pro control

The NIM microservices are prebuilt containers that use Nvidia's inference software and are meant to reduce deployment times. Two of these microservices are designed to help developers with simulation workflows for generative physical AI within Nvidia Isaac Sim, a reference application.

One of them, the MimicGen NIM microservice, lets users drive robot hardware from an Apple Vision Pro or another spatial computing device. It generates synthetic motion data for the robot based on "recorded teleoperated data," translating movements captured by the headset into movements for the robot to make.

Videos and images of the system show that it does more than move a camera in response to the headset's movements. Hand motions and gestures are also captured by the Apple Vision Pro's sensors and used as input.

In effect, users could watch the robot's movements and directly control its hands and arms, all using the Apple Vision Pro.

Rather than having a humanoid robot mimic the user's gestures exactly, systems like Nvidia's could instead infer what the user wants to do. Since users get no tactile feedback about what the robot is holding, directly mirroring their hand movements could be dangerous.

Another teleoperation workflow, demonstrated at Siggraph, let developers generate large amounts of motion and perception data from a small number of demonstrations captured remotely from a human operator.

For these demonstrations, an Apple Vision Pro captured the movements of a person's hands. The recordings were then simulated using the MimicGen NIM microservice and Nvidia Isaac Sim, which generated synthetic datasets.

Developers were then able to train a Project GR00T humanoid model on a combination of real and synthetic data. This approach is expected to reduce the cost and time spent creating training data in the first place.

"Developing humanoid robots is extremely complex -- requiring an incredible amount of real data, tediously captured from the real world," according to robotics platform maker Fourier's CEO Alex Gu. "NVIDIA's new simulation and generative AI developer tools will help bootstrap and accelerate our model development workflows."

The microservices, along with access to models, the OSMO managed robotics service, and other frameworks, are all offered under the Nvidia Humanoid Robot Developer Program. Nvidia provides access only to makers of humanoid software, hardware, or robots.

Read on AppleInsider
