Apple Intelligence & Private Cloud Compute are Apple's answer to generative AI

Following months of rumors, Apple Intelligence has finally arrived, and promises to provide users with more than just generative images.

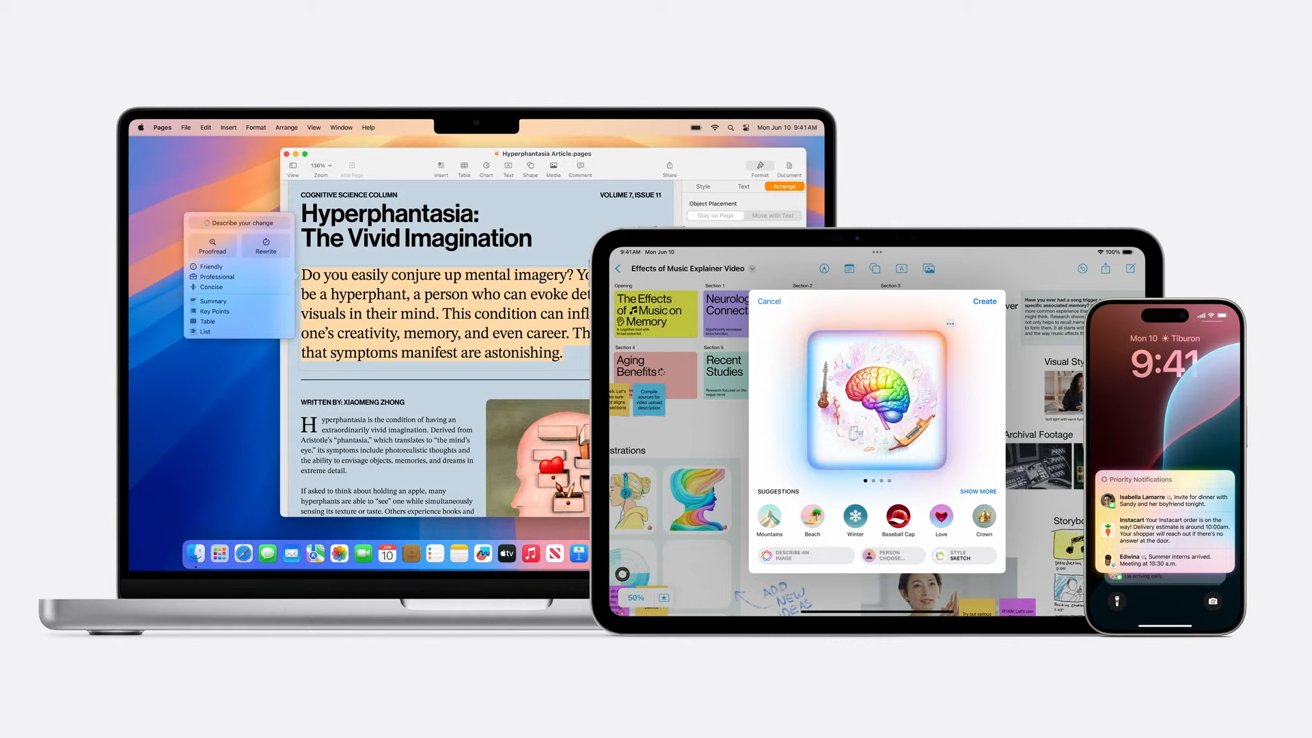

Apple Intelligence is a cross-platform AI initiative

Towards the end of Apple's keynote for WWDC 2024, following its main operating system announcements, the company moved on to the main event. It discussed its often-rumored push into machine learning.

Named Apple Intelligence, the new addition is all about using large language models (LLMs) to handle tasks involving text, images, and in-app actions.

For a start, the system is able to summarize key notifications, showing users the most important items in a summary, based on context. Key details are also surfaced to the user for each notification, while only the most important are shown under the new Reduce Interruptions Focus.

Apple Intelligence text options in Mail on iPadOS 18

System-wide writing tools can write, proofread, and summarize text for users. While this sounds like it could just be for messages and short text, it can also be used on longer stretches, such as blog posts.

The Writing Tools' Rewrite feature provides multiple versions of text for users to choose from, adjusting what the user has already written. These can vary in tone, depending on the audience the user is writing for.

This is available automatically across built-in apps and third-party apps.

Images

Apple Intelligence can also create images, again for many built-in apps. This includes personalized images for conversations with specific contacts in Messages, for example.

These images are created in three styles: Sketch, Animation, and Realism.

In apps like Messages, the Image Playground lets users create images quickly by selecting concepts like themes, costumes, and places, or by typing a description. Images from a user's photo library can also be added to the composition.

This can be performed within Messages itself, or through the dedicated Image Playground app.

Image Playground in iPadOS

In Notes, Image Playground can be accessed using a new Image Wand tool. This can also create images, using elements of the current notes page to work out what the most appropriate image to add could be.

The image generation even extends to emoji, with Genmoji allowing users to create their own custom icons. Using descriptions, a Genmoji can be created and inserted inline into messages.

A more conventional addition is in Photos, with Apple Intelligence able to use natural language to search for specific photos or video clips. A new Clean Up tool will remove distracting objects from a photo's background.

Actions and Context

Apple Intelligence can also perform actions within apps on behalf of the user. For example, it could open Photos and show images of specific groups of people in response to a request.

Apple Intelligence will support a wide array of apps

Apple also says Apple Intelligence is grounded in the context of a request within a user's data. For example, it could work out who family members are in relation to the user, and how meetings can overlap or clash.

One example of a complex query that can trigger actions in an app is "Play that podcast that Jamie recommended." Apple Intelligence will determine the episode by checking all of the user's conversations for the reference, and then open the Podcasts app to that specific episode.

In Mail, Priority messages can show the most urgent emails at the top of the list, as selected by Apple Intelligence. This includes summaries of each email, to give users more of a clue of its contents and why it was selected.

Apple Intelligence in Notes

Notes users can record, transcribe, and summarize audio thanks to Apple Intelligence. If a call is recorded, a summary transcript can be created at the end of the call, with all call participants informed of the recording automatically when it commences.

Naturally, Siri has been considerably upgraded with Apple Intelligence, including being able to understand users in specific contexts.

As part of this, Siri can also refer queries to ChatGPT.

Private Cloud Compute

A lot of this is based on on-device processing for security and privacy. The A17 Pro chip in the iPhone 15 Pro line is said to be powerful enough to handle this level of processing.

Many models run on-device, but some requests require in-cloud processing. This could be a security issue, but Apple's method is different.

Private Cloud Compute allows Apple Intelligence to work in the cloud, while preserving security and privacy. Models are run on servers running Apple Silicon, using security aspects of Swift.

Processes on-device work out if the request is sent to cloud servers or if it can be handled locally.

Apple insists the servers are secure, that they don't store user data, and that they use cryptographic elements to maintain security. This includes devices never talking to a server unless that server has been publicly logged for inspection by independent experts.

Not for all users

While Apple Intelligence will be beneficial to many users, the requirements will freeze out many from enjoying it.

On iPhone, the requirement for an A17 Pro chip means only iPhone 15 Pro and Pro Max users can try it out in beta. Similarly, iPads with M-series chips and Macs running Apple Silicon can use it too.

It will be available in beta "this fall," in U.S. English only.

Read on AppleInsider

Comments

https://www.macrumors.com/2024/05/09/apple-to-power-ai-features-with-m2-ultra-servers/

https://www.msn.com/en-us/news/technology/apple-intelligence-announced-at-wwdc-2024-apple-joins-the-ai-race/ar-BB1nY1q2

For sure they cannot offer what Apple is doing.

Also interesting how they played down the Apple data center chips.

I'm not really sure what you would expect Apple to talk about concerning their datacenter silicon. They aren't the company that will list specs on their server configurations. And they won't be selling these chips, they are for their own exclusive use. For many years I have repeatedly speculated that Apple was testing Apple Silicon in their own data centers.

In the same way, they don't describe what espresso machines are being used in the cafes at the Infinite Loop campus or what sort of HVAC units or networking cable brand is at the spaceship Apple Campus. And when you go to a pizzeria, you don't know what brand and model number the industrial grade mixer used to make the pizza dough. Or the sewing machine used to make your jeans.

The most important thing is that the data is private and the system architecture can be inspected by third-party observers. The model number on the chip isn't really relevant to anyone but a fraction of Apple staff members.

Here's what I wrote in a separate thread: "Remember that almost everything on Apple's devices can be disabled. Location Services, Notifications, Siri, iCloud services, Face ID, Touch ID, Apple Pay, microphone access, photo library access, camera access, music library access, Bluetooth, Wifi, whatever. You can basically run your iPhone like an iPod circa 2008 if you want."

Hell, you can turn off spell checking and auto punctuation if you want for a pristine GIGO experience.

If you have used Apple devices for more than a month and watched the keynote presentation with a modicum of attention, you will have noticed that these are ALL optional actions. If you just want to write an e-mail and hit send, you are still free to do so. You are free to type out your grocery list on Notes, draw stick figure people, make spelling errors, etc.

I wonder if it's a bunch of M2 Ultras, and I wonder if there's a custom server form factor...

And a year from now, Apple will announce new features some of which will only run on the iPhone 17 generation. If you have owned any Apple hardware devices more than a year, you should know this.

Believe me I will be disabling as much of the AI S*** as I can as fast as I can.

Trust me, I know. I have an iPhone 12 mini. I know when I upgrade my handset, I will lose the SIM card slot.

If you buy an iPhone 14 a week before iOS 18 comes out, you are pretty much guaranteed an iPhone that will have no AI features for the lifetime of that unit which should easily carry you into 2030. Even if you install iOS 18, Apple's hardware requirements state an iPhone 15 or later for a lot of this AI stuff. And based on what Apple has done in the past, you can be blissfully assured that they won't backport any of it to earlier devices.

Hell, you can buy an iPhone 15 today and stay on iOS 17 forever. Apple will not point a gun at your head and say you must upgrade to iOS 18.

As for disabling all of the AI carp, be our guest. I used Siri a couple of times when it was still a third party app and a few more times after it launched as an Apple service then I disabled it. How long ago was that? Twelve years? I don't use iMessage either. So yeah, go ahead and disable as much as you'd like. Hell, you can put your iPhone into airplane mode and just pretend it's an iPod.

One thing I know I will be doing is delaying my software upgrade. I just upgraded my iDevices to iOS 17 and Mac to macOS Sonoma. A week ago. Most likely I will wait until a week before WWDC 2025 before upgrading to iOS 18 and macOS Sequoia. This ensures a user experience with fewer OS bugs because Apple's software QA went down the toilet about 7-8 years ago.

You'll probably disable less than you think. No, if you're a professional writer, you don't want AI gunking up your mojo. That said, there are a lot of features on your iPhone that you use just like any other end-user, and you'll probably find the convenience of a pocket assistant useful. Even with the written word, not everything is a creative writing project. There are no doubt any number of mundane business communications that if you employed a personal assistant, with a few instructions for what you want, you'd hand that stuff off to them to write. Now you'll be able to do the same with the PA in your pocket.

Apple will not backport beta-tested iPhone 15 Pro features to older devices. If a feature is meant to be available on a particular device, the functionality will be available to test during the iOS beta period on those devices. That's what the beta is for: for people (developers mostly) to test. Remember that there are far more iPhone 13 out in the world than the number of iPhone 13 devices in Apple's various campuses around the world.

It's not like Apple will say "This feature ran great on the iPhone 15 Pro. Let's release it to a bunch of older devices and cross our fingers." Some other companies might do that but not Apple. They aren't that pathetically inept.

That’s the first place where a Siri update is needed.

I guess it reads as: too embarrassed to even acknowledge their existence in this context, be ready to dump them all to buy a next gen next year.