AMD launches RX 5000-series graphics cards with 7nm Navi GPUs

AMD has teased its next generation of graphics cards using the Navi GPU as the RX 5000-series, offering performance and power improvements over its Graphics Core Next offerings, with the first RX 5700 card in the range set to arrive this July.

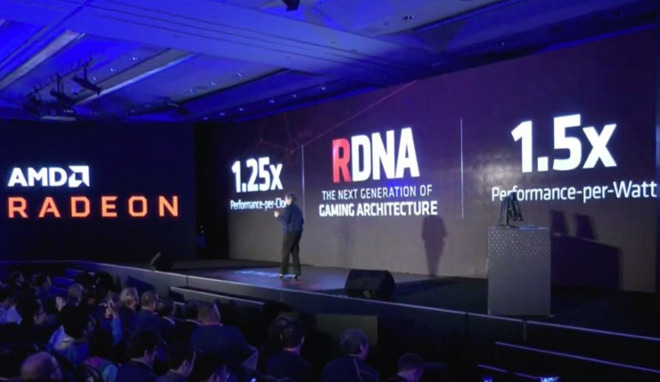

Announced at Computex on Monday during AMD's keynote, the RX 5000 series is using a new architecture called Radeon DNA (RDNA), which will be used alongside the more established Graphics Core Next architecture. As part of the architecture change, the new GPU called Navi will be used, which is said to offer considerable improvements over its predecessors.

The compute units within Navi have been refined from the versions used for Vega, to make each as efficient as possible. In theory, a Navi compute unit will be 25% faster than a Vega equivalent per clock, with a 50% improvement per watt of power used, in part due to AMD's switch to using TSMC's 7-nanometer production process.

At the same time, AMD has performed more refinement of its graphics pipeline to specifically cope with high clock speeds, along with introducing a multi-level cache to minimize bottlenecks.

To accompany the new architecture, AMD is also adding support for GDDR6 memory to the platform, further improving the memory bandwidth from that offered by GDDR5-based cards. Lastly, the GPU supports PCIe 4.0, offering the promise of increased bandwidth on systems that support that connectivity.

AMD's first card using the Navi GPU will be the RX 5700, which it will be launching in July. Details about the card were not disclosed, but more information will apparently be offered during E3 on June 10.

While AMD is one of two main companies in the graphics card industry worth watching, it is largely the only one of interest for Mac users. Modern Nvidia GPUs are not able to be used on macOS without some considerable effort, as Apple has made the decision to not approve Nvidia's drivers, effectively making AMD's cards the default option.

Comments

It'll also be interesting to see if these new AMD chips are anywhere near as fast as Nvidia's current chips, which are much faster and run much cooler than AMD's and have done for a number of years. Apple's ridiculous ongoing spats with the only two viable GPU manufacturers are so inane and childish. Ever since about 2003, when AMD accidentally leaked details of a new PowerBook, Apple's been back and forth refusing to deal with one or the other. I also have no idea why Apple is refusing to sign Nvidia's drivers, but it's a pretty low blow, especially since Nvidia supports cards going back 7 or 8 years and often releases Mac versions of its PCIe cards, when AMD doesn't.

Yet again Apple's political position with another company is harming its customers.

The timing of this seems perfect. If they’re launching in July then I’m sure Apple could have received samples by now for testing.

I think it would be good for AMD to appear at WWDC talking about some new pro version of Navi in the new Mac Pro.

I agree, in 2021 or so, this may be a big deal. It isn't today.

As for the whole Thunderbolt 3 thing, both of the new X570 AM4 motherboards listed on the ASRock website are tagged as "Thunderbolt 3" ready, and ASRock offers a TB3 AIC...

I really think Apple has been waiting on TB3 support for the AMD platform(s) & Threadripper 3 for the modular Mac Pro...

Another week & we may know...! ;^p

MplsP said: CPUs are much much more difficult to perform a die-shrink on than GPUs. GPUs are relatively simple compared to CPUs, so optimising for die-shrink is far easier.

I think a switch to AMD CPUs would be very unlikely at this stage. I'm not sure if AMD could provide the numbers that Apple needs, and even if they could, Apple's apparent path toward ARM CPUs would mean that a switch to AMD now would be an awkward step and waste of time for developers to optimise for. That said, 95% of things will just run on AMD CPUs when coming from Intel's x86.

It's not just ray tracing; for general purpose graphics Nvidia is dominating. The top 30 benchmarks on videocardbenchmark.net have AMD appearing just 5 times, and just once in the top 20. Even then, the Radeon VII is still miles behind the GeForce RTX 2080 Ti.

I'm aware about the general purpose results, but this varies a great deal in regards to workload -- and also not what I was talking about. Ray tracing has loads of potential, but it just isn't a thing right now, and may not be any time soon.

The Radeon Pro cards were really for compute workloads, so they aren't great at more general purpose workloads as shown above, but unfortunately as AMD also seemed to misjudge the market, the Radeon Pro cards now have big chunks of the silicon dedicated to the compute processing which is bogging down the general purpose performance. Perhaps this is partly why Nvidia refuses to support OpenCL, as the hardware support slows the rest of the GPU whereas Nvidia's CUDA doesn't. Navi may resolve this, possibly at the expense of compute performance despite their claims, as obviously only real life results will show.

But yes, I don't think hardware ray tracing is a particularly good metric to compare by right now.

I don't disagree, but it should be noted that OpenCL is on the way out. It all has to do with Metal compute performance now. AMD does a good job at this, just like they did with OpenCL. Arguably this is better for most Apple customers since most creative apps are more compute heavy. AMD also functions well enough for most games and 3D applications. However some 3D modelers may prefer higher realtime graphics performance to compute. Unfortunately most of them have already switched to PC to take advantage of Nvidia and more frequent GPU upgrades since upgradability is currently limited on Macs. Hopefully Apple will be able to win some of these users back with the new Mac Pro.

AMD will be launching their 7nm Ryzen and Threadripper chips soon. However, that has more to do with their fab partners than anything AMD is doing on their own.