AMD CEO says Apple's M1 chip is opportunity to innovate, underscores ongoing graphics part...

AMD CEO Dr. Lisa Su sat down with journalists in a Q&A session following a keynote address at CES 2021 on Tuesday, fielding a variety of questions including a request to comment on Apple's first foray into the desktop processor space.

Speaking to press via conference call, Su addressed a range of questions regarding AMD's upcoming plans, the x86 platform and new developments in a highly competitive semiconductor market.

Dr. Ian Cutress of AnandTech focused on the emergence of ARM processor designs. According to Cutress, ARM models are expected to greatly boost compute performance in the coming years and could begin to encroach on territory long held by x86 makers like AMD and Intel. ARM silicon is typically used in specialized deployments like servers, but the chip designs are now starting to show up in consumer products.

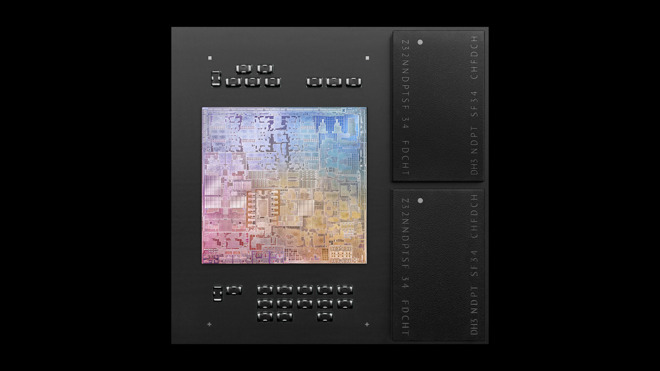

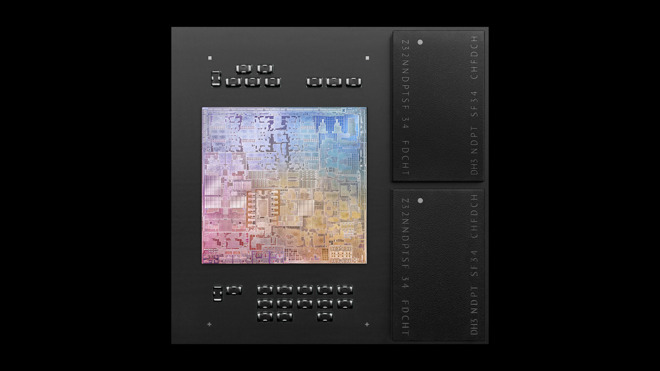

Apple, for example, introduced the M1 chip in its late-2020 13-inch MacBook Pro, MacBook Air and Mac mini models. The tech giant plans to move its entire lineup of Mac computers to custom ARM chips within two years. That presents an immediate loss in revenue for current CPU partner Intel, but also creates headwinds for the wider x86 market.

Su was asked how the M1 will impact AMD's relationship with Apple.

"The M1 is more about how much processing and innovation there is in the market. This is an opportunity to innovate more, both in hardware and software, and it goes beyond the ISA," Su said. "From our standpoint, there is still innovation in the PC space - we have lots of choices and people can use the same processors in a lot of different environments. We expect to see more specialization as we go forward over the next couple of years, and it enables more differentiation. But Apple continues to work with us as their graphics partner, and we work with them."

Apple relies on AMD's Radeon graphics cards to power high-end devices like MacBook Pro, iMac and Mac Pro, but that could change with a shift to in-house solutions. M1 Macs integrate Tile Based Deferred Rendering (TBDR) graphics cores on a system-on-chip design similar to the A-series processors used in iPhone and iPad.

While Apple is content to stick with integrated graphics for the initial wave of M1 Macs, it is possible that the company is working toward a dedicated GPU to better serve high-performance machines.

Apple's transition to ARM appears to be applying pressure to industry incumbents. On Monday, Intel detailed its forthcoming Alder Lake chip series, which seemingly takes a page out of the Apple Silicon strategy book by stretching use cases from mobile to desktop.

Comments

To work best, it also takes a little cooperation from application developers, because there are always some hooks in the OS's APIs that allow apps to indicate whether they prefer to run on the high performance or high efficiency cores. (I remember seeing a description of this API for macOS in a WWDC video, but I couldn't find a link for it to include here.)

As far as I know, so far, only Apple provides an OS for M1-based Macs. And it obviously includes all the OS features including the API hooks to make this work for the two types of cores in M1 chips. But if another OS comes out for M1-based Macs, it's not mandatory that the new OS provides the same features as macOS in terms of either the OS or the apps correctly choosing between the high efficiency and the high performance cores.

[edited to remove political commentary: RadarTheKat, moderator]

Also, as someone above truthfully stated, wait until we get a 10nm octacore chip on Alder Lake architecture, which should occur in 2022. That is when we will FINALLY be able to get an apples to apples comparison (pun not intended) on performance and power.

The Alder Lake CPUs will be primarily used in 2-in-1 ultraportables that will compete with the MacBook Air (except they will have touchscreens, 2-in-1 designs and some will even have 5G) and in gaming laptops. So if you want a 2-in-1 or to play Steam games, you aren't going to buy an M1 MacBook Air no matter how much faster it runs or how much less power it uses, because that M1 MacBook Air won't have the features, functionality or ability to run the software that motivates people to buy the Windows and Chromebook (Chromebooks will gain the ability to run Steam this year) competition. When you add the fact that the Alder Lake competition will also be several hundred dollars cheaper than an entry level MBA, that is still more reason why the M1 won't change Apple's 7%-8% market share.

Bottom line: more Chromebooks with Intel chips are going to sell in 2021 than Macs with M1 (or M1X or M2) chips. Apple isn't Intel's competition and those of you who insist on believing otherwise are just setting yourselves up for disappointment. My guess is that in 2 years all of these columns on the coming Apple dominance are going to just quietly disappear, just as the ones from 2012 on how iPads were going to crush Windows and 2014 on how Android, Google and Samsung were on their last legs and 2016 on how the iPad had crushed console gaming and Apple was going to buy what was left of Nintendo in order to exclusively feature them on iPads and Apple TV did.

IMO Apple's share will actually fall eventually, as switchers like having Windows availability as a backup. If Windows support for ARM improves, then perhaps things will be different. A fast CPU is great, but no good if you can't run the software you need on it, which is the exact issue Apple had in the mid to late '90s with Classic Mac OS. The CPUs were markedly quicker than Intel offerings but the software support was so crap that few outside of a hardcore base used Macs. Intel had a similar problem when trying to push MS and manufacturers to Itanium in the mid-2000s, which is why they were late to the party with x64. The world needs to bite the bullet and switch architectures. IPv6 is a similar switch: it's happening, slowly, which would be the case too if there was a viable non-x86 architecture running Windows at a reasonable speed.

The commonality of CPUs with low and high power cores will push OSs to support it natively with little support from developers required, much like the dual-GPU MacBooks which auto-switched depending on GPU load.

A quick look at the TDPs for Alder Lake shows Apple has little to worry about, as these are usually unrealistic/fraudulent power consumption figures bearing little resemblance to the actual load. Once you can acknowledge this it’s easy to see why Intel hasn’t simply outsourced to a 3rd party fab: the “Apple to Apples” node comparison won’t work well for them.

Such a comparison is irrelevant anyway as Apple’s key advantage is delegating performance critical tasks to dedicated silicon such as 4K HEVC/ProRes video workflows on their entry-level products.

Apparently, Apple thinks so too.

A GPU core-bump for the M1 should match any AMD mobile offering & I think Apple are more than capable of building high-performance dGPUs to complement their iGPUs for round-trippable workloads.

There is more to x86 than being some copycat. In fact the A/M series cores have been shown to closely follow the principles laid out by x86 cores in the past on a microarchitectural level. I just believe it would not be convenient to write about it.

Steve’s Apple was about making the best product for the users (including compatibility), Cook’s Apple is about making as much money as possible for his investors. That and using Apple as a political platform are his only concerns.

Where I would give Apple credit is for pushing this approach into the PC market first. To date, the prevailing thought in the PC market was that homogeneous multi-processing was the only way to go for PCs. Apple has demonstrated how their approach greatly impacts overall battery life. Welcome to 2011, Intel.

If you think 2022 Alder Lake Intel chips are going to be competitive with 2022 Apple Silicon, then I have a bridge you might be interested in buying.

I'd take that bet. Dual booting into Windows is a novelty that lots of people talk about but few people actually do. People will stay with Mac and possibly even increase in market share provided there are advantages for doing so. There has always been an OS advantage which is what attracts Mac users in the first place. Now, the hardware advantage, particularly for laptops (which sell the most) is so much greater with Apple Silicon, that Apple's share will only grow, not decline as you suggest.

100% agreed.

My initial thought with Apple's announcement to move to their own silicon was that they'd still use AMD for their top end Pro line. After thinking about it some more, I've turned full circle and don't expect they will rely on AMD at all going forward. GPUs are inherently parallel by design and when you rewatch Apple's videos including WWDC, etc. you see that Apple is very interested in demonstrating how scalable their technology is. No, it seems to me that Apple wants to take all of this in-house. This way, they can maximize their optimization for one brand of video cards, etc. Also notice how there is no provision for eGPUs with the new Apple Silicon machines. I think this very clearly signals Apple's intentions.

I'm not thrilled about it as I really like my current setup of notebook for mobility, and eGPU for home working/playing on the same machine. Though they may well be very capable, I can't imagine the GPUs in Apple's mobile Macs are going to be able to match what can be done with a full size graphics card in an enclosure. Plus, upgradeability is nice too. Hopefully I'll be proved wrong.

The bottom line is that the Mac Pro already has an existing bar that is set. If Apple is going to address this market at all, it's going to have to rise above that bar. Likewise, I'm optimistic about what they will bring to market, if a bit curious as to how they are going to do it.