Hands on with Apple's FaceTime Attention Correction feature in iOS 13

In the latest iOS 13 beta, Apple is leaning into its augmented reality prowess to fix a common eye contact problem on FaceTime calls. AppleInsider goes hands on and dives into the new feature to find out how it works.

FaceTime Attention Correction fixes your gaze

When making a FaceTime call, a user naturally wants to look at the screen -- at the person they are conversing with. On the other end, the recipient of the call just sees the caller looking down.

When the caller looks at the camera, the recipient sees this as the caller looking them in the eye -- a much more natural point of view. However, the caller is no longer looking at the recipient on the screen at that point, which means they can only rely on their peripheral vision to see the other person's reactions, rather than the clearer view they get when looking straight at the screen.

You can see how this looks for yourself by opening the Camera app and taking one picture while looking directly at the screen, then another while looking at the camera.
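To put rough numbers on that mismatch, the apparent downward gaze can be estimated with simple trigonometry: it is the angle between the line from the eye to the camera and the line from the eye to the point on the screen being watched. The sketch below uses assumed figures for illustration only (a 7 cm gap between the front camera and the other person's eyes on screen, and a 30 cm viewing distance); they are not measurements from any particular iPhone, and the function name is our own.

```python
import math

def gaze_offset_degrees(camera_to_target_cm, viewing_distance_cm):
    """Angle between looking at the camera and looking at a point on the screen."""
    return math.degrees(math.atan2(camera_to_target_cm, viewing_distance_cm))

# Assumed values for illustration only, not measurements:
# the other person's eyes appear about 7 cm below the front camera,
# and the phone is held about 30 cm from the face.
print(f"{gaze_offset_degrees(7, 30):.1f} degrees")

# Holding the phone farther away shrinks the offset.
print(f"{gaze_offset_degrees(7, 60):.1f} degrees")
```

Under those assumptions the gaze lands roughly 13 degrees below the camera at 30 cm, and under 7 degrees at 60 cm, which is why the effect is most noticeable when the phone is held close.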

FaceTime Attention Correction toggle in Settings

With the third beta of iOS 13, Apple added a new toggle for FaceTime Attention Correction. This aims to make it appear as though you are looking directly at a friend during a FaceTime call when you're actually looking at the screen.

An iPhone's TrueDepth Camera System

The feature uses the TrueDepth camera system, the same camera system used for Animoji, unlocking the phone and even augmented reality features found in FaceTime.

Taking a look behind the scenes, the new eye contact functionality is powered, of course, by Apple's ARKit framework. It creates a 3D face map and depth map of the user, determines where the eyes are, and adjusts them accordingly.

We don't know with certainty, but Apple is likely using ARKit 3 APIs, which would explain why this functionality is limited to the iPhone XS and iPhone XS Max and is not available on the iPhone X, as those APIs aren't supported on earlier models. It doesn't explain the lack of support on the iPhone XR, which uses the same beefy A12 Bionic processor.

Slight warping caused by the attention correction feature's augmented reality processing

If we take a straight object and move it in front of our eyes while on camera, we can see the slight distortion that is a byproduct of the augmented reality addition. There is a bit of a curve around the eyes and even the nose.
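Apple has not documented how the adjustment is applied, so the following is only a toy illustration of why a localized image warp bends straight lines. It assumes a simple vertical shift that is strongest at a hypothetical eye centre and fades out with distance (a Gaussian falloff). The function name and every number here are our own assumptions, not anything taken from ARKit or FaceTime.

```python
import math

def warp_point(x, y, cx, cy, dy, radius):
    """Shift a point vertically by up to dy, fading out with distance from (cx, cy)."""
    dist_sq = (x - cx) ** 2 + (y - cy) ** 2
    weight = math.exp(-dist_sq / (2 * radius ** 2))
    return x, y + dy * weight

# A straight horizontal edge passing just below a hypothetical eye centre at (0, 0).
edge = [(x, 5.0) for x in range(-40, 41, 10)]

# Apply the assumed warp: up to 3 units of vertical shift within ~15 units of the eye.
warped = [warp_point(x, y, cx=0.0, cy=0.0, dy=-3.0, radius=15.0) for x, y in edge]

for (x, y0), (_, y1) in zip(edge, warped):
    print(f"x={x:+3d}  y before={y0:.2f}  y after={y1:.2f}")
```

Points near the eye centre move the most while points farther away barely move, so an edge that started out straight ends up bowed, which matches the kind of curve visible around the eyes and nose.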

The whole thing looks very natural and no one would likely even notice without prior knowledge of the feature. It simply looks like the person calling is looking right at you, instead of your nose or your chin.

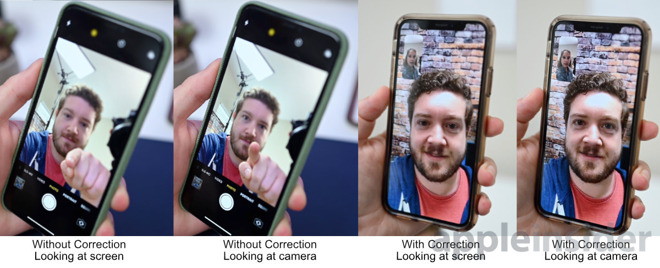

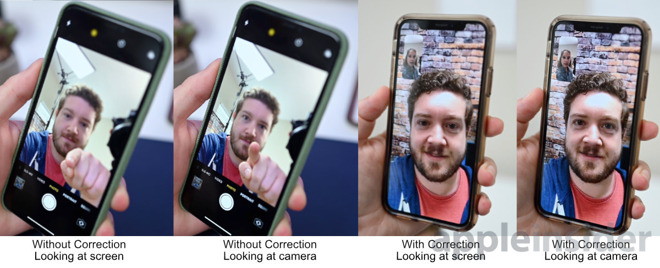

FaceTime Attention Correction example

Here you can see us looking at the screen and at the camera within the Camera app; when we look at the screen, it appears as though we are looking down. When we are in a FaceTime call with the feature on, it appears as though we are looking slightly upwards when we look directly at the camera.

Comments

https://arxiv.org/abs/1906.05378

https://youtu.be/rDUtBZXWrsE

You don't have to license anything if you can prove you're using your own proprietary implementation. You can't patent ideas, you patent the implementation of the idea.

I remember reading a patent about this on AI. We thought it was for FaceTime on Apple Watch to eliminate nostril views. The patent described digital re-animating of the face/eyes to be looking forward. Something like that.

But… even without anything except a basic video stream it is possible to basically calculate your way into getting more or less the same information as you would from a camera solution with actual 3D data. Knowing that it is a video chat we could easily start by identifying that there actually is a face in the video, giving us a very basic facial structure to start with; and then we track how the face moves in relationship to the background to figure out when it's the face or the camera that's moving, and based on knowing how the face is moving in relationship to any light sources we can start tracking how the shadows are moving on the face… Long story short, we can take our basic model of a facial structure and start adjusting it for the measurements of this particular caller (data that we could store in the relationship with the callerID, to speed up the process the next time).

That process would take time and not be as accurate, so it wouldn't be good enough to quickly and securely do FaceID; but with the computing power of modern phones it would easily be good enough for a video chat.

But would it be worth the resources?

Apple basically get this 3D data for free by leveraging hardware in these more pro/luxury/latest devices, so they don't have to do the hard work and at the same time the users of these newer devices get that warm and fuzzy "I'm better than you"-feeling.

Another argument here would be that sooner or later all devices will catch up with having this new hardware, so why do the hard work for a software-based feature that soon will be obsolete anyways?

Personally I would counter that argument with the iPad mini in its latest update not getting FaceID, so it seems like Apple will stick to using TouchID for their cheaper line of devices… but… of course… you could then get back to that whole idea of this being a perk of having a more expensive device…

Aaand… throughout all this I've actually kinda sorta been assuming that if they implement this in software then they might as well implement it on the receiving side; which is an assumption that I've made because to me it'd seem creepy as duck to call people knowing that their devices would adjust my face to make me look more aesthetically pleasing to them.