Apple's in-house chip design is the 'secret weapon' behind industry-beating performance

Apple executives believe that by designing their own Apple Silicon chips and AI, the company now has a significant advantage over traditional chipmakers that have to cater to a wide range of markets and customers.

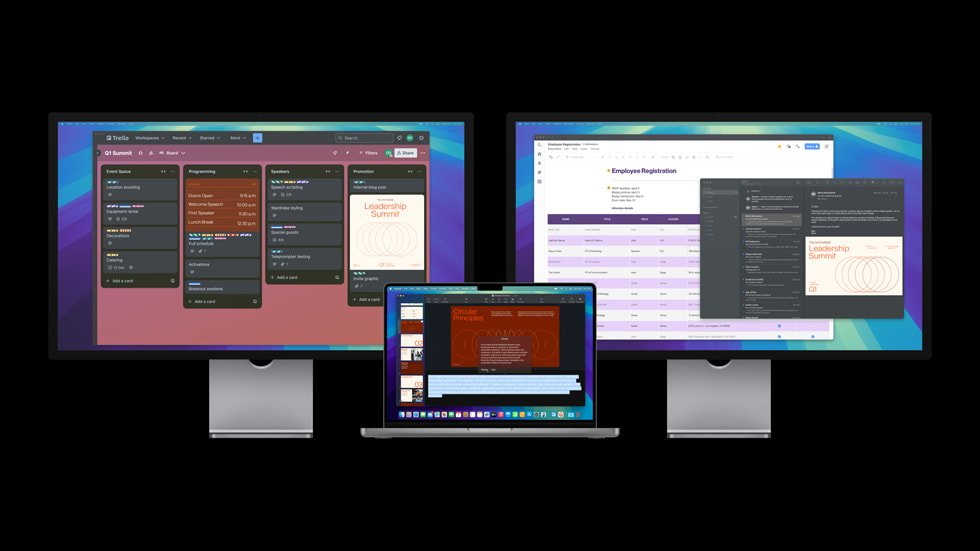

Apple is outpacing the rate of overall industry progress with its M-series chips. Image credit: Apple

Apple's Vice President of Mac Product Marketing Tom Boger and Vice President of Platform Architecture Tim Millet talked about the new M4 line of chips used in recent Apple product updates in an interview with The Indian Express. The company believes doing its own chip design gives it "a tremendous strategic advantage," said Millet.

"We are not a merchant silicon company," he added in encapsulating Apple's advantage. "We do not build chips and sell them to other [companies]."

By creating chips custom-built for the devices they will go into, the company avoids compromises in overall performance. Boger added that "no other platform can touch our power performance per watt. That's the tangible benefit to users."

Industry-leading chip innovation

Boger noted the dramatic increases in performance year-over-year as successive generations of Apple Silicon are released, outpacing the progress rate of the rest of the industry. The new M4, Apple says, brings customers "the world's fastest CPU core, delivering the industry's best single-threaded performance."

The two executives say there is more to the success of Apple Silicon than just delivering speed with minimal energy usage. "We take advantage of the three major components -- the architecture, the design, and the process technology," said Millet.

"Our fourth tool, really our secret weapon, I think, is our ability to co-design these amazing chips with the system teams and the product designers as they are imagining possibilities." Millet pointed to the new M4 Mac mini as an example of this.

"The opportunity was for us and for the design team to be able to come together and build this incredible new platform," he told the newspaper. "There is no way that machine could have come to life without that collaboration. And that is really what Apple is all about."

Millet noted that competing chip manufacturers "can't just go to the latest cutting edge technology like the second generation, three nanometer, but we (Apple) benefit from it in a way that we believe it is worth it. It delivers for us and our products and our customers we are trying to leave nothing on the table."

Boger added that it was rare to see the "pace of innovation year after year after year," noting that the first Apple Silicon chip debuted just four years ago. "That is the promise. That is a commitment we make to our teams to deliver innovations as they are available to us," he said.

The rise of the Neural Engine

Commenting on the rise of artificial intelligence in PCs and Apple's response of Apple Intelligence, Boger noted that there have been "intelligent" features in Macs for years. He noted that Apple first included a Neural Engine in its iPhone chip designs in 2017.

The Neural Engine and M-series chips attract more intensive workload customers. Image credit: Apple

Millet added that "this was inspired by our recognition of the importance of computational photography. We were seeing the amazing research that folks up in the University of Toronto were demonstrating [that] these new neural networks were capable of doing image recognition beyond the capacity of humans, or at least matching, and they were headed on a trajectory that was clear."

"And so we pounced on the opportunity to build that embedded capability into our camera processors for the phone," Millet said. Boger added that the Neural Engine was a core part of the first M1 chip.

"We have a great architecture for AI, and we also have developers taking advantage of Apple silicon to offer our customers intelligent features," he said. "So the M Series chips were always built for AI."

Boger said that his team saw "an interesting paper" in 2017 that discussed transformer networks, now the engine behind Large Language Models (LLMs) used in AI. Boger's team saw that the technology could have a major impact on the Neural Engine, and introduced them into the first M-series chips.

"It shows you the diligence that we spend all our time trying to figure out where the ball is moving," said Millet. "We try to make sure we are there before it gets there."

Innovation driven by users

Boger said in the interview that Apple Silicon continues to push boundaries in performance and energy efficiency "because that's what our customers do." He used the M4 MacBook Pro line as an example.

"For instance, you run the most demanding workload while you have it plugged in, and then you unplug it [and] it is going to give you the exact same performance."

In noting the addition of the M4 Pro and M4 Max chips, Millet said that the memory bandwidth is a key differentiator from the regular M4. "M4 Max has effectively about twice the memory bandwidth of M4 Pro, [which] will help someone who was really pushing the edge for a very, very large model."

Millet said that Apple works closely with software partners "to look for all the best opportunities to accelerate not just generic benchmarks, which we often get judged by, but more importantly by the workloads that we are actually delivering to our customers."

"We know what the hardware system and thermal design will look like, and we understand what the process technology nodes are, and we aggressively pursue our best silicon options," Millet said. "I have been doing this for more than 30 years [and] it is the best situation to be in."

Read on AppleInsider

Comments

It was a mic-drop moment for Apple. The rest of the industry was speechless because 64-bit wasn't expected for a couple more years. Insightful observers theorized that Apple could be on the path to a desktop processor.

So seven years later when Apple announced Apple Silicon, it wasn't that much of a surprise to the rest of the industry or people who paid attention to such things.

It has been very clear that control over the hardware, software, and service stack gives Apple an advantage that none of their competitors in various markets has. This is not "secret" or hidden. It has been in plain daylight for well over a decade.

It's amazing that even in 2024 there are journalists and technologists who still do not get this.

And remember that consumer technology innovation is driven by smartphones, the primary computing modality of consumers in the present day. It doesn't come from PCs. The A-series SoCs begat the M-series SoCs, not the other way around. And all of the major technology innovations we take for granted today -- wireless communications (including WiFi, Bluetooth, 5G+), NFC contactless payments, biometric identification, location services, digital cameras, computational photography/videography, display panel technology, battery technology, performance+efficiency cores, cloud services, etc. -- all have been driven by smartphones.

Google's business model is to track everything you do and sell that data to advertisers. They would benefit the most by having control over smartphone hardware which is evidenced by their continued work on Pixel smartphones. In fact, recent Pixel models are now using a Google Tensor G4 SoC which is now their fourth generation silicon.

My guess is that there are a couple of companies who have prototype smartphone SoCs in their labs. I know little about the Chinese smartphone market but most likely there are several companies (like Huawei) working on it.

It's possible that Amazon is trying to develop their own silicon for handhelds but it may never see the light of day in a shipping device.

Microsoft completely blew it. They were a dominant smartphone platform before the iPhone emerged on the scene. Microsoft fumbled it all away and now they are destined to stand on the sidelines and wistfully watch others lead the way. Consumer technology innovation hasn't been driven by PCs for well over a decade. Remember that Apple was not designing their own smartphone silicon when the iPhone debuted in 2007.

Facebook partially blew it. There was a Facebook smartphone but Facebook abandoned further development over a decade ago.

https://www.cnet.com/tech/mobile/heres-why-the-facebook-phone-flopped/

And now there is evidence Meta is trying to develop custom SoCs mostly for their cloud servers. But rather than be a major player in the smartphone industry, Meta is basically a few apps/services that are popular in some markets and relatively unpopular in others. There's plenty of evidence that shows that Meta's focus is misdirected. They sunk a lot of money into a VR blackhole and their inane "metaverse" B.S. instead of trying to make a dent in smartphones.

We all know it took them until the A12Z to make the Mac Mini with Apple Silicon. I really wonder how well MacOS ran on the A7, which I'm guessing was the first of the first prototypes for Apple to try it.

Sometimes the stock analysts and market gurus mistake Apple's steady perseverance towards achieving goals as an indicator that they are lagging behind. It's always more exciting and enticing for speculators to see companies making bold moves and promises to deliver the moon. Too often they deliver a rock, a slice off the moon, or a beta moon that hangs around for far too long. Apple typically delivers concrete solutions that have immediate consumable value. But Apple isn't immune from making big promises at times and trickling out partial solutions, especially on the software side of things. They're still much better on the hardware side and seem to have been sticking to a plan and execution process that is playing out remarkably well.

Apple Intelligence is, imo, looking more like a trickle-out delivery with a lot of uncertainty about what it really brings to people's everyday life. I have no doubt that it will be a huge step forward, eventually. By limiting its rollout to only the newest of the new hardware iPhone platforms it will take a lot of slick demos and hands-on user experience feedback and personal stories to compel owners of iPhone 14 and older iPhones to upgrade sooner based on the availability of Apple Intelligence. When the iPhone 4s arrived with Siri I remember a bunch of us tech nerds gathered around and asking Siri silly questions. The amusement factor wore off pretty quickly to the point where having Siri on all the newer iPhones, iPads, and Macs was not really a big deal at all. It was still okay, but I expect a fair number of people like myself use it rarely and only for the most trivial things, like skipping a music track or sending a quick text while driving. Apple Intelligence must do better.

The X Elite GPU is < 5TFLOPs, M4 Max is ~18TFLOPs:

https://www.notebookcheck.net/Qualcomm-Adreno-X1-85-4-6-TFLOPS-GPU-Benchmarks-and-Specs.850228.0.html

https://gfxbench.com/device.jsp?benchmark=gfx50&did=119508313

https://gfxbench.com/device.jsp?benchmark=gfx50&did=123984693

A lot of the performance-per-watt comes from TSMC hardware, so chips on the same node should get close to the same efficiency, but Apple has an advantage with end-to-end hardware and software. They control the OS, drivers, APIs, and hardware; no other manufacturer has this.

They originally wanted Intel to make the chips for the iPhone. If Intel had delivered on this, they probably would have stuck with them but there were too many issues with cost, development speed, IP, performance-per-watt.

https://news.softpedia.com/news/Ex-Intel-Boss-Regrets-Saying-No-to-Steve-Jobs-iPhone-354197.shtml

It took 10 years for Apple's mobile chips to scale to replace Intel chips in Macs. Maybe it was planned, but I think Intel just failed to deliver and it eventually made no sense to keep using them at all.

Remember how Steve described the iPhone: "the computer for the rest of us." Totally prescient.

While Steve died in 2011, undoubtedly most of what Apple is today was laid out in long-term roadmaps by him (high level things like Apple Silicon, not minutiae like what colors the Apple Watch was going to be offered in).

https://www.youtube.com/watch?v=5rN6CEO31gM

The current Qualcomm processor is at the bottom in almost all of the testing. The only thing it can claim is lasting maybe 30 minutes longer than the M4 in one battery test, and it's behind every Windows laptop, and that ain't good.

6:00 Performance per watt (M2 thru M4)

6:37 (Power draw)

9:00 (wildlife extreme Qualcomm is at the bottom)

16:39 (battery life testing Wi-Fi) M2 thru M4

In the comment section, there are many very upset Windows users; their minds are blown. The M2, M3, and M4 laptops made by Apple are literally lapping the competition.

This YouTube channel might be the best at testing: no nonsense and to the point.

But it can have a downside for certain applications. Gaming of course is obvious, but also real work that requires grunt, like CAD software. The big players just do not bother with Apple, even though the hardware would be a fantastic marriage with their software.