Apple & EU slammed for dangerous child abuse imagery scanning plans

Security researchers say that Apple's CSAM prevention plans, and the EU's similar proposals, represent "dangerous technology" that expands the "surveillance powers of the state."

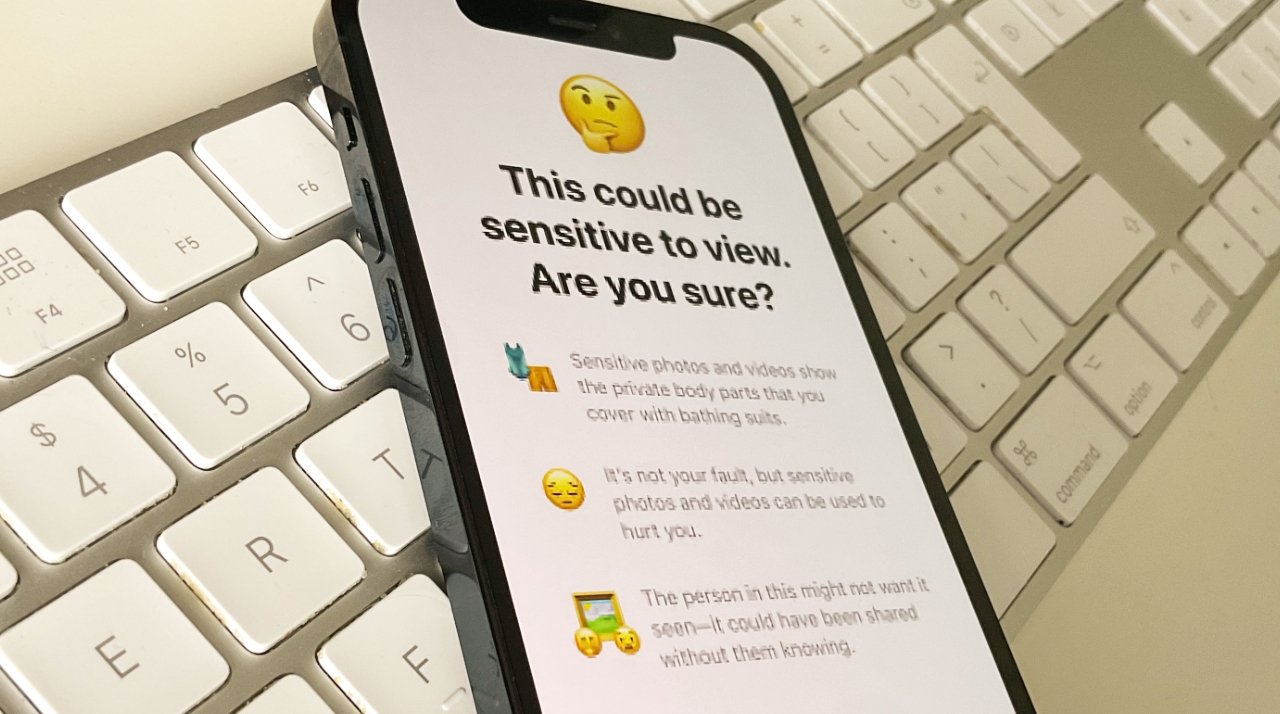

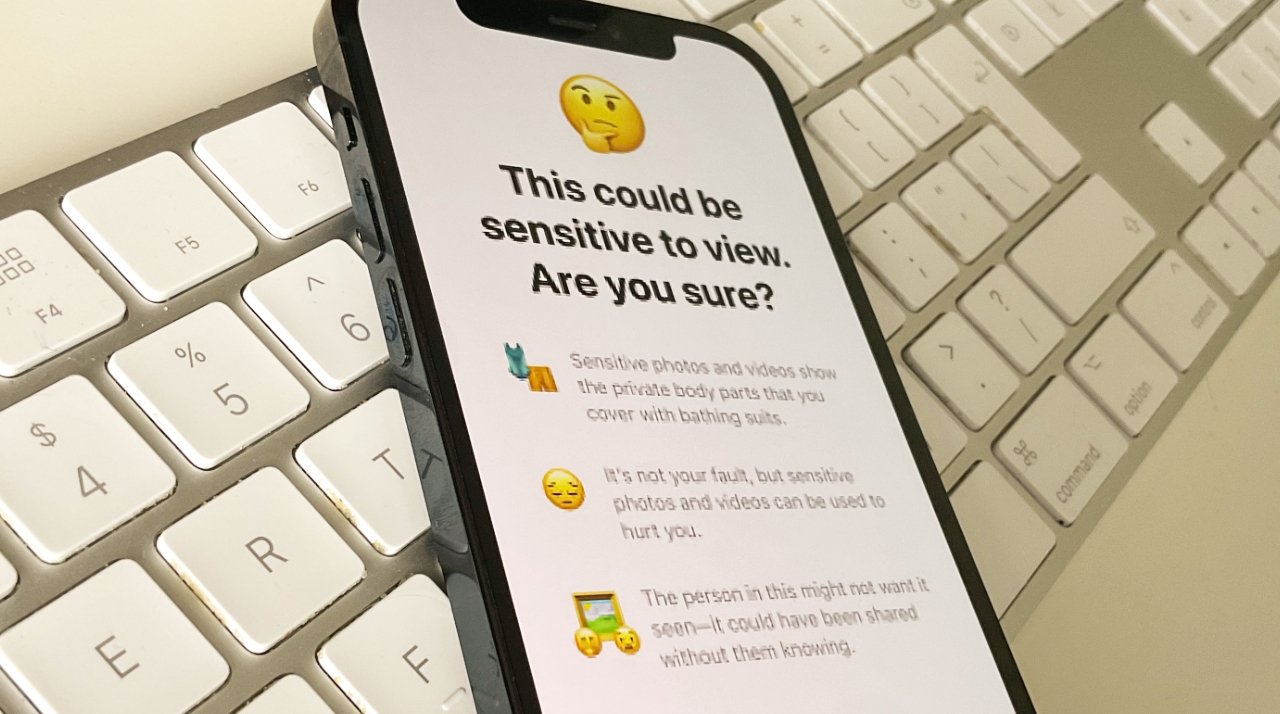

Apple's new child protection feature

Apple has not yet announced when it intends to introduce its child protection features, after postponing them because of concerns from security experts. Now a team of researchers has published a report saying that similar plans from both Apple and the European Union represent a national security issue.

According to the New York Times, the report comes from more than a dozen cybersecurity researchers. The group began its study before Apple's initial announcement, and says it is publishing despite Apple's delay in order to warn the EU about this "dangerous technology."

Apple's plan was for a suite of tools intended to protect children from the spread of child sexual abuse material (CSAM). One part would block suspected harmful images from being seen in Messages, and another would automatically scan images stored in iCloud.

The latter is similar to how Google has already been scanning Gmail for such images since 2008. However, privacy groups believed Apple's plan could lead to governments demanding that the firm scan images for political purposes.

"We wish that this had come out a little more clearly for everyone because we feel very positive and strongly about what we're doing, and we can see that it's been widely misunderstood," Apple's Craig Federighi said. "I grant you, in hindsight, introducing these two features at the same time was a recipe for this kind of confusion."

"It's really clear a lot of messages got jumbled up pretty badly," he continued. "I do believe the soundbite that got out early was, 'oh my god, Apple is scanning my phone for images.' This is not what is happening."

There was considerable confusion, which AppleInsider addressed, as well as strong objections from privacy organizations and governments.

The authors of the new report believe that the EU plans a similar system to Apple's in how it would scan for images of child sexual abuse. The EU's plan goes further in that it also looks for organized crime and terrorist activity.

"It should be a national-security priority to resist attempts to spy on and influence law-abiding citizens," the researchers wrote in the report seen by the New York Times.

"It's allowing scanning of a personal private device without any probable cause for anything illegitimate being done," said the group's Susan Landau, professor of cybersecurity and policy at Tufts University. "It's extraordinarily dangerous. It's dangerous for business, national security, for public safety and for privacy."

Apple has not commented on the report.

Read on AppleInsider

Comments

... now the EU knows Apple possesses this capability – it might simply pass a law requiring the iPhone maker to expand the scope of its scanning. Why reinvent the wheel when a few strokes of a pen can get the job done in 27 countries?

“It should be a national-security priority to resist attempts to spy on and influence law-abiding citizens,” the researchers wrote ...

For those who want more details on what the EU may propose, read this report commissioned by the Council of the European Union and the recommended Council Resolution for Encryption. https://data.consilium.europa.eu/doc/document/ST-13084-2020-REV-1/en/pdf CSAM scanning as proposed by Apple is a horrible idea IMO. Anyone saying "oh it's no different than Google does in the cloud" or "anyone that doesn't want it just don't use the Photos app" needs to read this.

When the capability exists, overreaching governments will exploit it.

Spotlight literally exists to index all content on the phone. That capability has existed for over a decade. Why haven't we seen any governmental attempt to force reporting if that index shows any document or message containing "bomb" and "president" or "minister", for example?

I still think they should carve iCloud photo sync off into a separate tool, and make it clear the CSAM scanning is only part of that tool. If you want no CSAM scanning on your device, just delete the ability to sync photos to iCloud. Done. Solves everybody's complaints.

iCloud:

https://nakedsecurity.sophos.com/2020/01/09/apples-scanning-icloud-photos-for-child-abuse-images/

MS and Google:

https://www.microsoft.com/en-us/photodna

https://protectingchildren.google/intl/en/

...the only difference was Apple doing the iCloud-photo scan on device prior to syncing the upload to the iCloud server. This actually increased privacy because it wouldn't record any hits unless the threshold for child porn was hit. As is w/ server-side scans, each hit can be logged.

There is no functional difference -- if governments try to mandate other types of scans nothing about it being server-side makes that harder. Arguably, it only makes it easier.

https://www.eff.org/deeplinks/2021/08/apples-plan-think-different-about-encryption-opens-backdoor-your-private-life

Absolutely mind boggling.

Also, I want implicit content rating ASAP. Technology exposes our kids to all manner of information & I’d like potentially objectionable material flagged preferably without homosexuality straw man excuses, we deal with these things as a family.

The one key difference is that server-side scanning could release the precursory hints (any hash hits at all) and associated data whereas the iPhone will release nothing until multiple hits have been confirmed.

That way, even if someone could break into Sally's iCloud storage, he couldn't view images Sally sent in confidence to her boyfriend.

https://www.reuters.com/article/us-apple-fbi-icloud-exclusive/exclusive-apple-dropped-plan-for-encrypting-backups-after-fbi-complained-sources-idUSKBN1ZK1CT

Don't hold your breath.