Apple refining Siri to cut down on mistaken activations, and to draw less power

Apple is researching ways for Siri to better assess whether you really meant to call it, and to tell when you're done speaking to it.

Siri could become better at only responding when you want it to

You've done this. You've had to hurriedly slap your palm over your Apple Watch because Siri has begun talking. And you've had that perplexing moment of trying to fathom out what you could possibly have just said that sounded anything like "Hey, Siri." Apple wants to change that.

There is also the separate issue of when Siri doesn't respond, or when you turn your Watch and the iPhone in your pocket replies instead, but that's a problem for another day. For now, Apple is focusing on how Siri can decide whether to respond based on how interested you seem.

Apple has previously applied for a patent in which the iPhone's Face ID camera interprets your emotions when you ask Siri to do something. But a new patent application starts with whether you're even looking at your device when you speak.

"Attention Aware Virtual Assistant Dismissal" is a newly revealed patent application that both lists criteria for assessing whether you want Siri and describes what the device should do next.

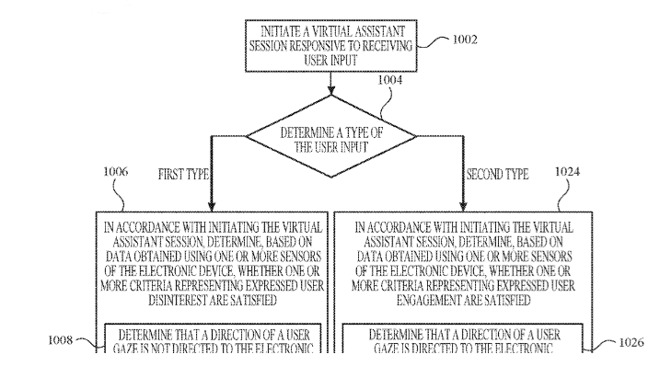

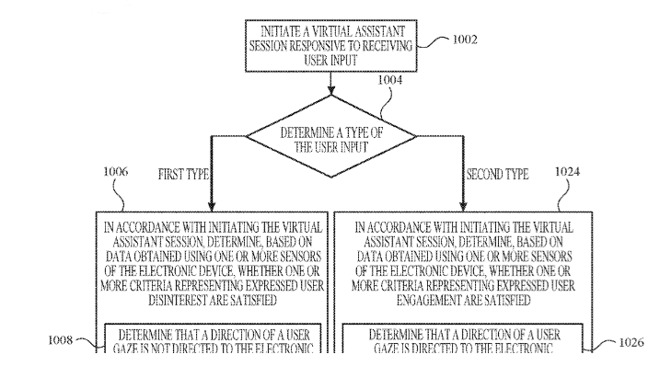

"[The] process includes determining, based on data obtained using one or more sensors of the electronic device, whether one or more criteria representing expressed user disinterest are satisfied," says the application.

Many factors go into determining this "disinterest," and so into deciding whether Siri should take you seriously. A key one is whether the device's cameras can detect your gaze.

If you're looking directly at the device when you've activated Siri, the iPhone can be pretty certain that you meant to call for it and that you're going to say a command next. However, you might well say "Hey, Siri," and then be momentarily distracted by someone or something around you.

So the patent application discusses the length of time that might or might not be reasonable in working out your attention. Apple refers to this as the "characteristic intensity" of a contact, or in other words, of your saying "Hey, Siri."

"[This] characteristic intensity is, optionally, based on a predefined number of intensity samples," says Apple, "or a set of intensity samples collected during a predetermined time period... relative to a predefined event (e.g., after detecting the contact)."

The patent application avoids specifying actual durations for this delay, preferring instead to say that it varies depending on the situation. The application spends less time on how long a device should wait, and much more on how it can assess intention or disinterest.
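As a rough illustration of the sampling idea in the quoted passage, the Python sketch below derives a single characteristic value either from a fixed number of recent samples or from the samples that fall inside a time window after a triggering event. The function names, the `(timestamp, intensity)` sample format, and the choice of a simple mean are all assumptions made for illustration; the application as quoted here specifies none of them, and the window length is left as a parameter because no durations are given.

```python
from statistics import mean

# Each sample is a (timestamp_seconds, intensity) pair. This format is an
# assumption for illustration, not something the application specifies.
Sample = tuple[float, float]


def characteristic_from_count(samples: list[Sample], count: int) -> float:
    """Summarize the most recent `count` samples (a "predefined number")."""
    if count <= 0:
        raise ValueError("count must be positive")
    recent = samples[-count:]
    if not recent:
        raise ValueError("no samples available")
    return mean(value for _, value in recent)


def characteristic_from_window(
    samples: list[Sample], event_time: float, window: float
) -> float:
    """Summarize samples collected within `window` seconds after `event_time`."""
    in_window = [v for t, v in samples if event_time <= t <= event_time + window]
    if not in_window:
        raise ValueError("no samples fall inside the window")
    return mean(in_window)
```

Either function reduces a stream of readings to one number that a later step could compare against a threshold. A mean is only one possible summary; a maximum or a percentile would slot into the same structure.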

Beyond whether you're looking at the device, the criteria for concluding that you're disinterested draw on many different sensors. The device's accelerometer, for instance, can tell whether you're lowering the device or picking it up.

Detail from the patent application showing the start of determining whether a user wanted to activate Siri or not

The system can also determine whether you've placed the device face-down on a surface. Similarly, if you have the display covered by your hand, perhaps as you carry it, then the front-facing light sensor can tell that.

That same sensor can also contribute to the device recognizing whether it is in an enclosed space. If you have your iPhone in a pocket or a bag, for instance, you're less likely to be calling for Siri.
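The sensor checks described above could be combined along these lines. This is a minimal Python sketch under stated assumptions: the `SensorSnapshot` fields and both function names are hypothetical, and the readings are reduced to simple booleans. The "one or more criteria" rule follows the wording quoted from the application, but this is not Apple's actual logic.

```python
from dataclasses import dataclass


@dataclass
class SensorSnapshot:
    """Hypothetical, simplified readings taken shortly after the trigger phrase."""
    gaze_on_device: bool     # camera: is the user looking at the device?
    being_lowered: bool      # accelerometer: is the device being lowered?
    face_down: bool          # device is resting face-down on a surface
    display_covered: bool    # front-facing light sensor: display is covered
    in_enclosed_space: bool  # light sensor: likely in a pocket or a bag


def disinterest_criteria_met(s: SensorSnapshot) -> list[str]:
    """Return the names of the disinterest criteria this snapshot satisfies."""
    checks = {
        "not looking at device": not s.gaze_on_device,
        "device being lowered": s.being_lowered,
        "device face-down": s.face_down,
        "display covered": s.display_covered,
        "in enclosed space": s.in_enclosed_space,
    }
    return [name for name, satisfied in checks.items() if satisfied]


def should_dismiss(s: SensorSnapshot) -> bool:
    """Dismiss the assistant if one or more criteria are satisfied."""
    return bool(disinterest_criteria_met(s))
```

A phone in a pocket, for example, would satisfy several criteria at once, while a phone held up and looked at would satisfy none. A real system would presumably also weigh how long each signal persists, since a momentary glance away should not count as disinterest.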

This isn't just about cutting down on the number of times a phrase similar to "Hey, Siri" is followed by your saying something a little more fruity. Apple is also looking at how it can maximize the performance of its devices.

"Operating a digital assistant requires electric power, which is a limited resource on handheld or portable devices that rely on batteries and on which digital assistants often run," it says. "Accordingly, it can be desirable to operate a digital assistant in an energy efficient manner."

So if your iPhone can tell very quickly that you weren't calling for Siri, it doesn't have to activate it pointlessly.

This patent application is credited to three inventors, including Rohit Dasari, whose previous work includes related patents such as one about how apps can integrate with a digital assistant.


Comments

Good idea on handoff for HomePod but it is a few extra steps and it used to work so well with Siri. I've read countless posts with 'cures', none work for me. Weirdly she can play some of the music e.g. my Eagles albums but not my Glenn Frey albums. This all started when Apple Music was born which I don't subscribe to. Also after an update or a HomePod restart, everything works like it used to for about five minutes and will play anything... then a short while later... 'Sorry I can't find Glenn Frey in your Music Library' again.

I half wonder if it could be related to where I bought the CDs which were ripped into iTunes in the first place. Many were from the UK before I moved here 30 years ago. Perhaps the new algorithms only work now on CDs purchased in the country where you live? I will try to investigate this.

There’s probably a setting for that.

Simple questions such as "What time do the Twins play?" work, though inexplicably half the time it wants "Minnesota Twins" and the rest of the time it doesn't care. But more complex questions such as "Is Home Depot open?" almost never give me an answer other than "Here is what I found on the web for..." It should be smart enough to know that I mean my closest Home Depot, etc. The rest of the time it just doesn't understand.

As far as commands, fugettaboutit. "Remind me to take out hamburger when I get home" or "Remind me to turn on the Game at 4:00." is pointless. That sort of command just confuses Siri. I've tried different phrasing, different patterns of the question "Set a reminder for noon to water the plants." Nope, it's always been a hard fail for me.

How about “refining” Siri so that it’s actually useful for more than “five minute timer” and has some capability to respond to CONTEXT??

Can’t do it. It’s still just a pipe dream. Until someone figures out actual intelligence, all of this so-called (and inappropriately named) “AI” is going to keep going nowhere. It’s algorithms, not intelligence. The more the industry obsesses over algorithms for everything [that they don’t want real humans employed to do], the worse things get (looking at you, Google & Facebook).

These “assistants” can’t do much of anything (and only speed up a couple of the few tasks they can accomplish, like “five minute timer”). The more they try to mimic the appearance of human intelligence, the more obviously stupid it looks. “Artificial Stupidity” is the result. It’s the uncanny valley for intelligence simulation.

My biggest Siri complaints are its non-usefulness when I have bad/no cell reception (do I really need an internet connection to have Siri set a timer?) and Siri’s varying abilities per device. So frustrating to ask something on my Watch just to be sent to my phone.

Sometimes I have an issue where I’m intending HomePod to hear me but something else will respond. For instance, I’ll ask HomePod Siri to play a song and my iPad, which is sitting on a table nearby, will start playing, or my Watch will respond and my phone will start playing even though my wrist is by my side and my phone is in my pocket.

The thing I find most baffling is how different people get different results when asking the same question when phrased the exact same way. I think it was @SpamSandwich who had an issue a couple of years ago. When asking Siri who starred in a particular movie the response was “here’s what I found on the web.” When I asked the exact same way Siri (on my iPhone) replied with who starred in the movie, along with the movie poster, run time and other information about the movie. It’s bizarre.

When will Siri get out of beta??????

Sorry, for the last 30 years or so that line comes to mind every time I hear the word Tesco.