Apple TV+ Dolby Vision HDR streams failing for some viewers

Some users are finding that Apple TV+ shows streamed in Dolby Vision HDR to Apple TV 4K set-top boxes aren't playing back correctly, if at all.

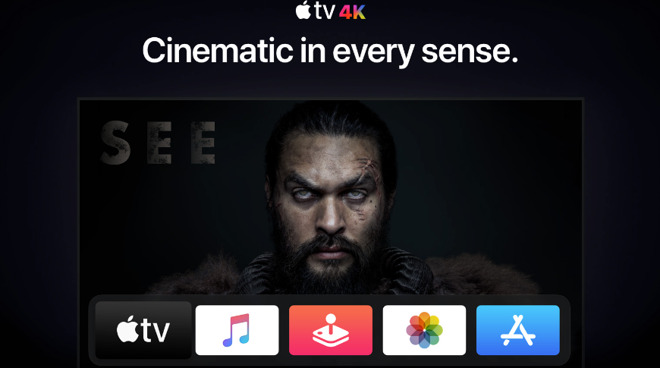

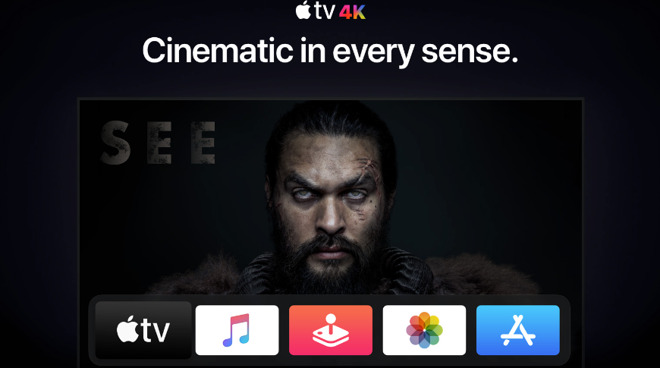

Apple TV+ and Apple TV 4K can play shows in Dolby Vision HDR

Apple TV+ has been noted for providing the highest bitrate of all the streaming services, and Apple itself has talked up how all its shows are made in Dolby Vision HDR for the highest image quality. Users need an Apple TV 4K and a TV set that can display Dolby Vision HDR, but some who have both are now reporting that the feature has been switched off.

Currently, about 120 people are complaining about this in the Apple support forums, and more on Twitter, but while the overall issue is the same, the shows affected appear to vary.

@AppleTV @AppleTVPlus why isn't you own programming claiming to play in Dolby Vision only working in HDR on my Apple TV 4K. Only programming that's working correctly is Dickinson.

-- Anthony (@RealSwaggyT)

MacRumors, which first spotted the issue, also reports that it's not only new episodes that lack this image quality, but all previous episodes of the affected shows as well.

As those episodes were streamed in Dolby Vision HDR before -- and stream properly to third-party televisions -- it can't be that the source material is lower quality or that the service isn't capable of streaming it.

The most likely explanation is that there is some problem with the service and while it's being fixed, Apple has switched off the higher-quality versions. This doesn't account for "Dickinson" still working for many people, and it's also still possible that only a few users are affected overall.

However, the first reported cases of the problem were almost three weeks ago, and Apple has not yet commented. AppleInsider has not yet experienced the problem. Perhaps related, one staffer has seen a recent tvOS update cause HDCP problems on a setup that had worked for years, suggesting that the device is now more finicky about consistent bitrate across cabling.

Dolby Vision HDR brings high-quality video, and its companion Dolby Atmos provides high-quality audio to match, for many Apple devices:

- An iOS device released in 2018 or later and running iOS 13

- Mac mini 2018

- MacBook Pro 2018, MacBook Air 2018 or later, running Catalina

- Apple TV 4K on tvOS 12 or later

As well as offering new Apple TV+ shows in this higher image quality, films and television sold through the iTunes Store can now be offered in Dolby Vision. If you've previously bought HD versions of titles, you now get the Dolby Vision ones automatically and for free.

Comments

The episodes are actually in Dolby Vision (which you can check with the Playback HUD, which you can activate via Xcode), but make my Dolby Vision TV switch to HDR.

If I disable "Match Dynamic Range" they play beautifully in Dolby Vision, just as they should.

I read somewhere that some older Dolby Vision TVs had big issues when playing these Apple TV+ shows - that might be why Apple forced the shows to display in HDR10 instead of Dolby Vision via Apple TV 4K.

The shows still appear as Dolby Vision on my iPad mini.

But we are all getting the data on a shared pipe, and your quality is only as good as the weakest link in the system, which can change over time. I see this all the time with any streaming service. Netflix has had this issue for years; this is why Netflix starts up and does a stream test to see the speed of your connection. At first it would be fine, and halfway through the show you can see the quality drop off, since somewhere in the path a bottleneck built up and you are not getting as much data as you were in the beginning.

Face it, ENET sucks: there is no way to guarantee QoS end to end, and the connection path from one end to the other can change many times over the course of a stream. They tried solving the problem with bigger and faster pipes. It sounds like Apple may have turned it off, since the downside could be a far worse experience. The fact that only a small number of the millions of people watching these shows are noticing and complaining means more people have no idea whether the quality level is what it should or could be. It was the same thing back in the early 2000s, when HD hit and people were going out and getting HD TVs with no idea the content coming from their cable operators was still SD.

Also don't let anyone tell you streaming a 4K Dolby Vision or HDR10+ movie from a streaming service (even Apple's) is the same quality as playing a Blu-ray UltraHD disc either. It is not. Blu-ray UltraHD is a plainly superior experience, something I did not know would be so evident before being loaned a player and a couple of movies. I've now bought one for myself, a refurbed Sony UBP-X700 for less than $80 (eBay). What a huge difference with the right content, and easily moved between TVs. Oddly enough tho, Dolby Vision Blu-ray content does not look better and in fact looks worse to me on the TCL than HDR10 does on the Samsung.

My guess? You can't find one, and certainly not for less than a very good to excellent smartTV costs at the moment.

EDIT: If you find one tell these guys. they can't find one either.

https://hometheaterreview.com/i-just-want-a-dumb-tv/

If you want HomeKit and Airplay 2, Sony just pushed an update to the following models:

Applicable TV model series:

It’s funny. I must have “Match Dynamic Range” enabled to force the ATV 4K to display HDR correctly. It seems that without this on, HDR isn’t triggered and content will only show in non-HDR 4K, whether it be HDR10 or Dolby Vision.

FWIW I will sometimes use my Nvidia Shield rather than doing my streaming via the smartTV interface, but my TV will still be collecting various viewing metrics. For that matter my AppleTV does too. Some I can opt out of and some I cannot, and that applies to all the smart services I have as best I can tell. Where I can do so I opt out, but these services have become tricky for that. Not all privacy settings are in the same menus so that even when you think you've opted out of personal tracking altogether you actually haven't. To me that's obviously by design, providers hoping you weren't paying enough attention or perhaps no attention at all.

Even for the privacy-espousing Apple the defaults are "On" for collecting and monetizing very detailed personal use data and like everyone else Apple understands most users don't change defaults.

https://support.apple.com/en-gb/HT208511